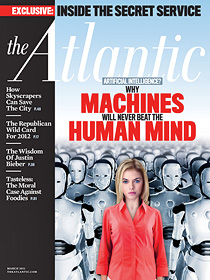

Is the Atlantic becoming the National Enquirer? This month, the cover blares, “Why Machines Will Never Beat the Human Mind,” which sounded pretty fishy to me. But potentially interesting! So I read the cover story, which turns out to be a fairly modest piece about a guy who took part in this year’s Loebner Prize competition, which is designed to find a computer that can carry on a five-minute conversation so realistic that a panel of judges (or 30% of them, anyway) think they’re actually talking to a human being.

OK fine. So far no computer program has ever won, and last year the computers lost yet again. But why does this mean machines will never beat the human mind? Brian Christian is the author, and after 8,000 words of saying nothing at all on this subject, he finally says this:

When the world-champion chess player Garry Kasparov defeated Deep Blue, rather convincingly, in their first encounter in 1996, he and IBM readily agreed to return the next year for a rematch. When Deep Blue beat Kasparov (rather less convincingly) in ’97, Kasparov proposed another rematch for ’98, but IBM would have none of it. The company dismantled Deep Blue, which never played chess again.

The apparent implication is that—because technological evolution seems to occur so much faster than biological evolution (measured in years rather than millennia)—once the Homo sapiens species is overtaken, it won’t be able to catch up. Simply put: the Turing Test, once passed, is passed forever. I don’t buy it.

Rather, IBM’s odd anxiousness to get out of Dodge after the ’97 match suggests a kind of insecurity on its part that I think proves my point. The fact is, the human race got to where it is by being the most adaptive, flexible, innovative, and quick-learning species on the planet. We’re not going to take defeat lying down.

No, I think that, while the first year that computers pass the Turing Test will certainly be a historic one, it will not mark the end of the story. Indeed, the next year’s Turing Test will truly be the one to watch—the one where we humans, knocked to the canvas, must pull ourselves up; the one where we learn how to be better friends, artists, teachers, parents, lovers; the one where we come back. More human than ever.

Seriously? That’s it? That’s what the cover headline is based on? Surely a better answer is that IBM built Deep Blue solely for its PR value, and once IBM won they had gotten all the PR out of it that they ever would. Win or lose, there was no point in continuing.

Plus there’s the fact that computers have gotten better since 1997. Hell, there are mobile phones that play grandmaster-level chess these days.

What a letdown. I was hoping the piece would actually have something interesting to say about AI, but it didn’t. This is especially disappointing because I’m so thoroughly convinced that human-level AI is not just possible, but inevitable. I figure all we need is hardware about a million times more powerful than we have now (current hardware doesn’t allow us to come anywhere close to human-level AI because current hardware has about the same processing power as an earthworm) plus another few decades of software development. But I might be wrong! And I’d like to hear a good argument from anyone who thinks I am.

I often think that the reason I’m so bullish on artificial intelligence isn’t because I have such a high regard for AI, but because I have such a low regard for biologic intelligence. Christian argues that computers are good at analytic, left-brain stuff, but humans will fight back with our awesomely passionate right-brain abilities (i.e., we’ll “learn how to be better friends, artists, teachers, parents, lovers.”) But you know what? I’ve watched The Bachelor, and it mostly proves that love can be cynically engineered just as well as any suspension bridge.1 Human emotions, as near as I can tell, are just as much the result of prosaic neurochemical reactions as anything else, and there’s really no reason to think that computers won’t eventually be able to emulate them just as well as they emulate anything else. Maybe better. After all, it’s not emotion that separates us from the rest of the animal kingdom. Human behavior just isn’t as deep as we like to think.

But like I said, maybe I’m wrong. Christian’s piece sure didn’t make much of a stab at persuading me, though.

1I’m serious about this, which makes me sort of gobsmacked at the show’s popularity. Don’t viewers realize that the real moral of the show is that you can pretty much guarantee to produce — real! honest! heartfelt! — love if you simply follow a fairly simple set of cookbook steps? Why would anyone want to have that lesson pounded home season after season?