A pair of MIT economists, Erik Brynjolfsson and Andrew McAfee, have written a new book suggesting that computers are finally getting smart enough to do jobs that only people could do in the past. Nothing new there. But they've also joined a still small but growing number of observers who fear that the jobs being displaced are being displaced for good:

> Faster, cheaper computers and increasingly clever software, the authors say, are giving machines capabilities that were once thought to be distinctively human, like understanding speech, translating from one language to another and recognizing patterns. So automation is rapidly moving beyond factories to jobs in call centers, marketing and sales—parts of the services sector, which provides most jobs in the economy.
>
> During the last recession, the authors write, one in 12 people in sales lost their jobs, for example. And the downturn prompted many businesses to look harder at substituting technology for people, if possible. Since the end of the recession in June 2009, they note, corporate spending on equipment and software has increased by 26 percent, while payrolls have been flat.
>
> …Productivity growth in the last decade, at more than 2.5 percent, they observe, is higher than the 1970s, 1980s and even edges out the 1990s. Still the economy, they write, did not add to its total job count, the first time that has happened over a decade since the Depression.

In the same way that investors get giddy when economic booms have lasted a long time (this time is different!), there's always a danger of getting too pessimistic when an economic downturn lasts a long time. Just because this recession is a deep one doesn't necessarily mean that it has brand new causes or that it's never going to end.

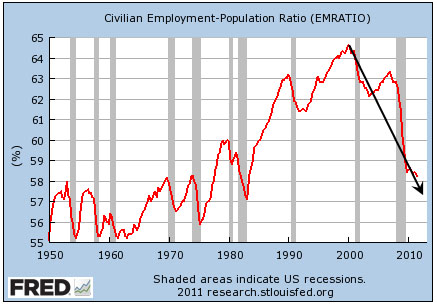

That said, take a look at the chart on the right. You've probably seen it dozens of times: It shows the percentage of people in the United States who are employed. Here's the important thing about it: It didn't peak in 2007 and then plummet during the Great Recession. It peaked in 2000, and it's been dropping ever since. Even the huge housing and credit bubble of the aughts held it at bay only slightly.

In other words, something happened around 2000 that pushed people out of the labor force. There are lots of possible culprits, so it’s wise not to get too invested in a single explanation. Still, I’d say that 2000 is also about the time that computers seriously started to do human jobs. Just a little bit at first, and then more and more. This trend was masked a bit by the high fever we ran in 2003-07, but when the fever broke we compressed seven or eight years of decline into two.

My back-of-the-envelope guess has always been that job losses during the Great Recession have been about one-third structural and two-thirds cyclical. The cyclical part we could address with fiscal and monetary policy if our political leaders had the guts to do it. But I suspect that at least some of the explanation for the structural part is the growing sophistication of computers, and it’s not clear what we can (or want to) do about that. Computers can’t drive cars or trucks yet, but that day isn’t far away. And when it comes, I still wonder what all those drivers are going to do.