This is a pretty amazing story from Wired reporter Adrian Chen about the army of workers who spend their days monitoring the raw feeds of social networking sites to get rid of “dick pics, thong shots, exotic objects inserted into bodies, hateful taunts, and requests for oral sex” before they show up on America’s morning skim of Facebook and Twitter:

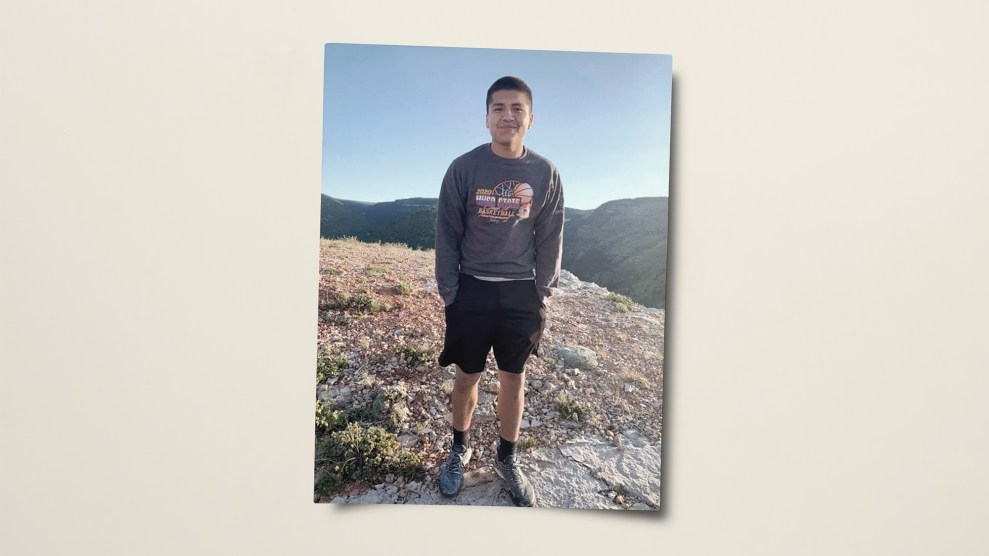

Past the guard, in a large room packed with workers manning PCs on long tables, I meet Michael Baybayan, an enthusiastic 21-year-old with a jaunty pouf of reddish-brown hair….Baybayan is part of a massive labor force that handles “content moderation”—the removal of offensive material—for US social-networking sites. As social media connects more people more intimately than ever before, companies have been confronted with the Grandma Problem: Now that grandparents routinely use services like Facebook to connect with their kids and grandkids, they are potentially exposed to the Internet’s panoply of jerks, racists, creeps, criminals, and bullies. They won’t continue to log on if they find their family photos sandwiched between a gruesome Russian highway accident and a hardcore porn video.

….So companies like Facebook and Twitter rely on an army of workers employed to soak up the worst of humanity in order to protect the rest of us. And there are legions of them—a vast, invisible pool of human labor. Hemanshu Nigam, the former chief security officer of MySpace who now runs online safety consultancy SSP Blue, estimates that the number of content moderators scrubbing the world’s social media sites, mobile apps, and cloud storage services runs to “well over 100,000”—that is, about twice the total head count of Google and nearly 14 times that of Facebook.

Given that content moderators might very well make up as much as half the total workforce for social media sites, it’s worth pondering just what the long-term psychological toll of this work can be.

We often hear about how the new app economy is largely a jobless economy, but thanks to the general scumminess of human beings, maybe that’s less true than we think. Cleaning up the internet for grandma is a grueling, never-ending job that, for now anyway, can only be done by other, less scummy, human beings. Lots of them.

It’s true that the “basic moderation” jobs are largely overseas and don’t pay much, but second-tier moderators are mostly US-based and are paid fairly well. As you’d expect, though, most don’t last long. Burnout comes pretty quickly when you spend all day exposed to a nonstop stream of torture videos, hate speech, YouTube beheadings, and the entire remaining panoply of general human degradation. That’s what the rest of Chen’s story is about. It’s a pretty interesting read.