Are you worried about the robots coming to take your job? You should be! But that’s still a ways away for most of us. In the meantime, the robots will be deciding which jobs we’re allowed to have. Today, the consistently fascinating Lydia DePillis points us to a new study that evaluates how well computer algorithms do at hiring new workers. The test bed is a large company with multiple locations. The workers perform relatively rote cognitive work that the authors can’t reveal, but it is “similar to jobs such as data entry work, standardized test grading, and call center work.”

In order to hire better workers, this company rolled out a new test that consists of “an online questionnaire comprising a large battery of questions, including those on technical skills, personality, cognitive skills, fit for the job, and various job scenarios.” So how did stony-hearted Mr. Robot do?

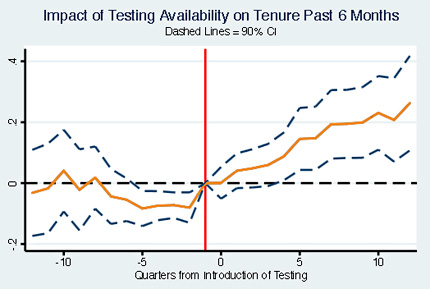

Better than humans, according to the authors. The test rates each applicant as green, yellow, or red, and they found that greens stayed on the job for 12 days longer than yellows, who in turn lasted 17 days longer than reds. This is significant since the average job tenure at this company is 99 days. More to the point, the authors find that more interference from hiring managers leads to worse results. “In our setting it provides the stark recommendation that firms would do better to remove discretion of the average HR manager and instead hire based solely on the test.”

But maybe hiring managers choose more productive workers? Nope. “In all cases, we find no evidence that managerial exceptions improve output per hour. Instead, we find noisy estimates indicating that worker quality appears to be lower on this dimension as well.”

Hmmph. I guess it’s HR managers who really need to be scared here. Apparently they simply add no value at all for jobs like this. Eventually, though, we’re going to start looking at whether these tests systematically discriminate against women or blacks or other protected classes. It would be pretty easy for this to happen either intentionally or unintentionally. Then the robots will either have to get smarter or else, ironically, find themselves out of a job.