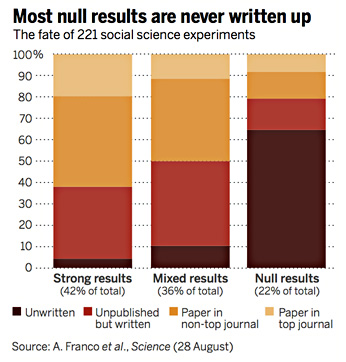

You all know about publication bias, don’t you? Sure you do. It’s the tendency to publish research that has bold, affirmative results and ignore research that concludes there’s nothing going on. This can happen two ways. First, it can be the researchers themselves who do it. In some cases that’s fine: the data just doesn’t amount to anything, so there’s nothing to write up. In other cases, it’s less fine: the data contradicts previous results, so you decide not to write it up.

The second way it can happen is via journal editors. Generally speaking, they prefer papers with exciting new results. This, of course, makes things even worse for researchers. They’re not much interested in writing up negative results in the first place, and they’re even less interested if they know there’s virtually no chance of getting them published in a good journal.

Why bring this up? Because yesterday Brian Resnick wrote about a truly remarkable case of publication bias. It revolves around research into whether the hormone oxytocin increases trust between humans. At first, multiple lines of research suggested it does. But then Anthony Lane, one of the primary researchers in the field, began getting negative results:

> After 2010, fewer and fewer of their lab’s experiments yielded data that confirmed the oxytocin could reliably increase levels of trust….The doubts crested in 2014 when Lane and his colleagues couldn’t replicate their own envelope study….The lab was able to publish this negative finding, but Lane felt a larger problem was lurking. Labs are judged on the strength of their published work. And Lane’s published portfolio on oxytocin just wasn’t representative of their work anymore. They still had five papers showing promise for oxytocin, and only one casting doubt.
>
> In a new paper published March in the journal Neuroendocrinology, Lane and his colleagues go through their “file drawer” of studies, and conclude the whole of their work yields an inconclusive result on the power of oxytocin spray to change behavior. They looked at 25 different tests their lab conducted. Only six of the 25 tests yielded significant results. In aggregate, the difference between the oxytocin sniffers in their studies and placebo groups “was not reliably different than zero,” the paper found.
>
> When they tried to submit their null findings, they “were rejected time and time again,” the paper reports.

So there you go. Not just a boring “no result” study from an unknown researcher. Nor a trivial correction to previous work. This is a well-known researcher evaluating his entire output on a subject and concluding that he’s been wrong for years. And yet he couldn’t get it published.

Lots of null results don’t deserve to be published. But lots of them do: otherwise readers are left with an impression that the evidence is far more positive than it really is. That’s the case here: the overall impression Lane and others had left is that oxytocin works. It increases trust levels. Without a corrective, that’s what people would go on believing.

Everyone knows about this problem. Everyone agrees—in theory—that it needs to be addressed. And yet nothing ever seems to happen. Science deserves better.