Wren McDonald

Let’s do a little experiment. I’m going to tell you two stories, and at the end of this article, we’ll come back to them.

Here’s the first story: Last year, millions of children in the United States received the vaccine for measles, mumps, and rubella—and only a few had a serious reaction to it. Shortly after the injection, about 1 in 4,000 children developed a fever that caused a seizure. About 1 in 40,000 children experienced a blood disorder.

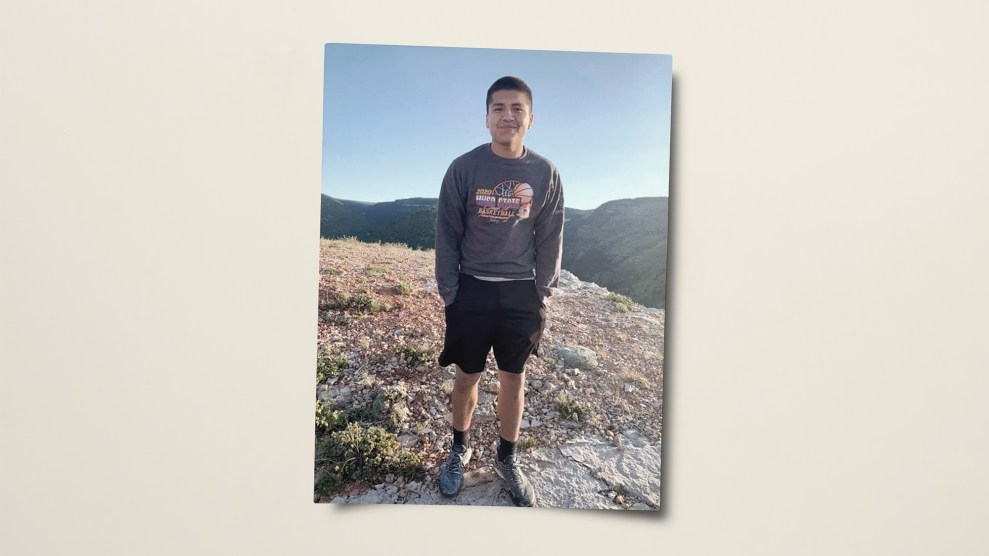

Here’s the second story: A baby I’ll call Jayden was developing normally until he received a set of routine vaccinations at 15 months. The next day, he awoke screaming and went into fits of rage. He began to engage in repetitive behaviors. By 18 months, he could no longer recognize his parents or siblings. He lost the words he had previously known, and over the next few months, he became violent. At age 2, Jayden was diagnosed with autism.

The first story comes from the Centers for Disease Control and Prevention and is based on meticulously collected data about vaccines’ safety and efficacy. Jayden’s story was one of hundreds of narratives in a Facebook group called Vaccine Injury Stories, where anti-vaccine advocates post anecdotes about friends or family members, mainly kids, who they believe were hurt by immunizations. Anyone can contribute a story, and no one verifies whether it’s true.

The first example is typical of how scientists communicate with us: Most major scientific bodies instruct scientists to stick to the facts when correcting misinformation. Yet a growing number of experts believe that facts alone can’t compete with the narrative techniques deployed by the purveyors of bunk. In her forthcoming book, Viral BS: Medical Myths and Why We Fall for Them, Seema Yasmin, a professor of primary care at Stanford University, argues that science communicators must harness the power of storytelling to beat the trolls at their own game. “Facts don’t really seem to be able to change people’s minds,” Yasmin says. “Stories can be much more powerful.”

There’s growing evidence that this approach can work. The battle against vaccine misinformation offers an instructive example. A 2015 study found that parents had a more favorable opinion of vaccines after looking at photos and hearing stories of children suffering from measles, for which safe and effective vaccines exist. The advocacy group Vaccinate Your Family posts videos of people telling emotional stories about losing a loved one to a vaccine-preventable illness. During a weeklong ad campaign on Hulu last summer, its videos racked up more than 297,000 completed views. “We are those geeks that pore over all the research about messaging about how to change minds,” says Amy Pisani, the group’s executive director. “We know that telling a personal story is paramount to doing that.”

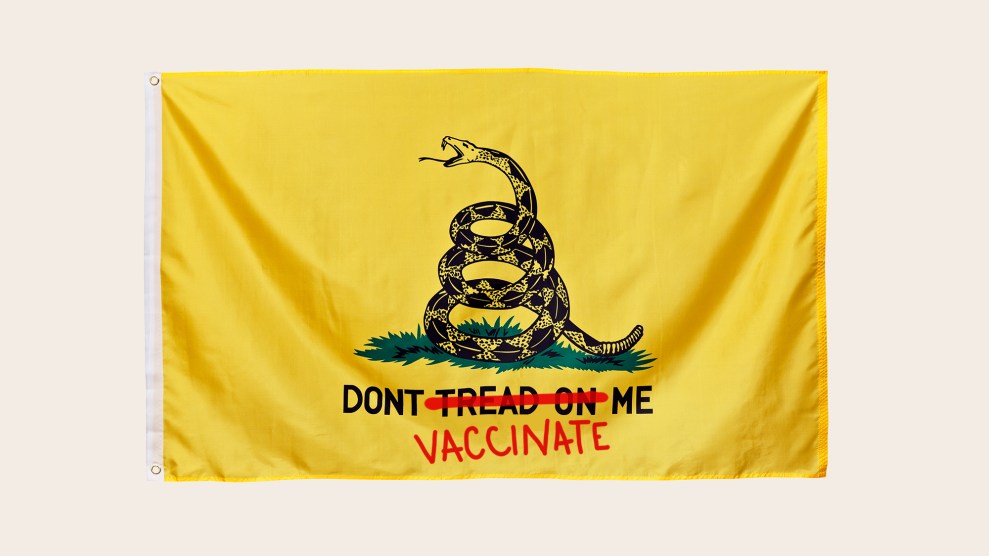

However, Yasmin cautions that emotional testimonials don’t always work. Research suggests that while they can be highly effective at swaying parents who are on the fence about vaccines, they can backfire on people who already strongly oppose vaccines, leading them to “double down” on their beliefs. For this reason, Yasmin says, scientists must get better at tailoring their messages to particular audiences. “What anti-science campaigners do really well is research the anxieties and concerns of different communities,” she says. Take coronavirus misinformation: In online parent communities, you’ll find emotional posts about the detrimental effects of school closures on kids’ mental health. In right-wing groups, members post memes about how mask mandates violate civil liberties. In religious communities, believers argue that masks are ungodly. Rumors quickly evolve and adapt, as anthropologist Heidi Larson, who runs a misinformation-fighting group called the Vaccine Confidence Project, recently explained to the New York Times: “They morph as the story goes on, and people repurpose a particular rumor to fit their situation.”

The easiest way to reach people may be to catch them before they make up their minds. If you tell them what kinds of misinformation to expect before they encounter it, they’re more likely to resist it. A group of University of Cambridge psychologists has developed a game, called Bad News, that encourages users to get into a troll’s mindset. When I played, I earned an award for picking a meme that exploited people’s emotions. (“They test anti-aircraft guns on innocent puppies!”)

The goal of the game is not to train people to be terrible online, but to “elicit a state where people are a bit more vigilant, a bit more critical,” says Melisa Basol, one of the researchers working on it. This preemptive approach may be more effective than debunking individual pieces of misinformation after the fact. Psychologists have identified a phenomenon wherein scientific misinformation continues to influence our thinking even after we learn that it’s incorrect. Is antiperspirant linked to breast cancer? Is there arsenic in rice? (No and yes, respectively, in case your memory needed jogging.)

Which brings us back to our two stories. Do you recall the exact figures from the first story based on CDC data? Do you remember what happened to baby Jayden? For most of us, the feelings that the second story elicited are much more durable—whether the story is true or not. That phenomenon is well understood by those who spread fake news, and scientists must learn to use it—along with other techniques perfected by trolls—to their advantage. In this era when many Americans have rejected reality, Yasmin says, “Facts are not enough.”