I’ve always loved racing games. I first got addicted way back in the ’90s, when I’d jam quarters into arcade cabinets with the full-sized car seat and stick shift, and hurtle around virtual tracks. During the ’00s and early ’10s I became a narcotic partisan of the Burnout racing series on my PlayStation—games in which you drove at such a frantic pace that the landscape blurred. And what, precisely, was the allure of all this pell-mell driving? I think it activated some latent Walter Mitty mechanics in my soul. My day job is sufficiently sedate (I sit around talking with people and typing up what they say) that I needed some romantically intense escape: split-second decision-making at 200 mph.

Most importantly, though, racing games gave me a wonderful feeling of mastery. They’re hard, often brutally so. You have to learn the performance characteristics of a given car and experiment with how well it accelerates or drifts into a curve. You similarly have to learn (by merciless, crashing-and-tumbling error) the quirks of each track. Three minutes in, my dendrites are a gorgeous riot of electricity. You get a fantastic, incremental sense of progress: Each time around the track, I sharpen my skills. Eventually, I’m so good that I’m coming in first place, winning access to fresh tracks and new cars. It’s a delightful flywheel of exploration, learning, and improvement.

Getting better at a difficult task: This feeds the soul. Harvard professor Teresa Amabile has long documented how “small wins”—the experience of constant, gradual progress—can be deeply fulfilling. This is a core reason why some video games are so richly satisfying, and also why, whenever events in my real life seemed uncontrollable or spinning into chaos, I could find temporary solace in a game. Whatever crap was breaking loose, wherever else I was screwing up, a racing game offered a soothingly linear relationship between toil and reward.

But that has all pretty much crashed and burned, thanks to the advent of what the tech industry calls “microtransactions.” These are the little moments inside a game when, if you realistically want to progress further, you have to buy something—with real money. A pastime that used to offer rewards for hard work, grit, and perseverance has turned into a matter of who is willing to whip out a credit card and spend their way to success.

This transformation is unsettlingly redolent of how our broader economic zeitgeist has evolved into a pay-to-win scheme: People with money to burn can buy their way up the ladder, hard work be damned. It seems hypercapitalism, having already wreaked havoc on the real world, has come for the world of play.

The first time I recall stumbling across microtransactions was during the early 2010s. I’d been playing the Asphalt racing games for mobile phones. They were enormously fun—precisely the breakneck speeds and twisty, gnarly tracks that I love. The first few games were issued under the industry’s so-called premium model: You paid a modest amount—$20 or less—to download the game, which was then yours to play until the sun burned out. The gameplay was delicious. The better I got, the more often I came in first, earning myself piles of “currency” redeemable for new cars and new tracks.

Then, in 2012, Asphalt embraced microtransactions. The game price was slashed to 99 cents—and later zero. In this new iteration, as usual, I would gradually master a particular car and nail the winner’s slot, gaining enough in-game currency to score new vehicles, etc. But then, suddenly, my progress slowed and the winnings grew more meager. I found I had to re-race a track over and over to win enough gems and currency to keep going. It was frustrating, and just as my frustration was beginning to boil over and I was ready to toss the game aside, a message would pop up, like: Hey! If you want, you could buy a big pot of coins for $2.99.

So I paid. I wanted those cars and tracks! But the transaction left a sour taste in my mouth. I wasn’t playing the game anymore—it was playing me, sucking me in with a supposedly “free” driving experience and then applying the brakes just to shake me down. Worse, Asphalt kept ramping up the aggravation level—too often I would need another few bucks’ worth of stuff to proceed. Sure, technically I could grind away and eventually reach my destination without paying, but it’d take much longer. Game developer Roger Dickey, formerly of Zynga, which created the Facebook hit FarmVille, calls this “fun pain”—when an otherwise enjoyable game contains just enough irritation that you’ll pay to get rid of it.

By the late 2010s, microtransactions had metastasized. They were particularly common in free mobile games, but they’d even begun to show up in pricier games for home consoles like the Xbox. You’d shell out your $60 for the privilege of playing, but the games demanded more. Some of the upgrades they offered were purely cosmetic, like a fresh suit of armor in Assassin’s Creed. These were less annoying than purchases that gave players an advantage—although in multiplayer games the right outfit can actually help you recruit experienced partners, conferring greater success. (A newbie skin is cringe.) But some expensive games sold powerups, too. You might spend weeks slowly grinding on quests to bring your warrior up to a powerful level 60 in World of Warcraft—or just buy a level-60 character for $60. Grimmer yet was the explosion of so-called loot boxes: You could purchase boxes containing random upgrades that were typically mediocre but occasionally super valuable—the gaming equivalent of buying a lottery scratcher ticket.

Why, exactly, did developers introduce these ghastly mechanics? The charitable view is that the economics of game publishing is quite brutal. Convincing someone to shell out $60, or even $20, for a game is hard—on mobile it’s near impossible. So the companies, particularly in the mobile realm, decided to make money instead by nudging players to buy in-game items week after week and month after month.

The problem for many players—including yours truly—is that microtransactions shatter the notion of fair play. After all, the idea that every player who hits “start” gets an equal shake is precisely what made video games so pleasingly different from real life. In meatspace reality, our paths are littered with inequity and random bad luck. Employees know there’s often zero relationship between how diligently they beaver away and how much they get paid. Devoted longtime workers are hurled into the street when their CEO decides it’s time to “restructure.” A senior female worker toils for years, only to see that glad-handing younger dude—the one she trained!—get promoted as her supervisor because he’s such a promising kid. (Oh, and his dad golfs with the chairman.)

“A big reason why people enjoy video games is to essentially be able to step out of reality,” says Ellen Evers, a longtime gamer who—as professor of marketing at UC Berkeley’s Haas School of Business—has studied games. “Then all of a sudden, your income in the real world starts affecting this game world. And that just makes it feel unfair to people, right?”

Evers, a fan of World of Warcraft, would spend months gradually “leveling up” her characters. So when someone would show up with a powerful character they’d clearly purchased, she felt demoralized—it was like witnessing the feckless children of wealthy donors swagger into Harvard. Worse, many would turn out to be lousy players, Evers says, because they hadn’t put in the long hours it normally takes to level up a character from 1 to 60 by slowly and painfully mastering the intricacies of play. Try partnering with one of these pay-to-play gamers on a raid and you’re likely to get your collective asses handed to you, because they’re not just wealthy, they’re incompetent.

One can blow a nontrivial amount of money within these supposedly free mobile games. I’ve seen posts by Asphalt players who say they’ve dropped more than $100—one player calculated that if you refused to buy anything at all, it’d take you more than six years to acquire all the cars. “We always strive to improve the sensitive balance between monetization and game progression,” Gameloft, Asphalt’s creator, noted in an email, “and we are always considering feedback from our players on that matter.”

The online action game Diablo Immortal, released last year, was so “monetized out the ass,” as the gaming streamer Luke Stephens put it to me, that “to get the final end-game stuff would take you like five years of grinding, maybe probably more—or you could just spend money.” Another player—a well-heeled one—set out to demonstrate how deeply commerce was embedded in the Diablo Immortal experience. He claimed he bought everything the game offered—and it cost him $100,000.

His stunt felt parodic, but only slightly. Some players really do spend thousands of dollars inside these games. And it turns out that the entire ecosystem of microtransactions revolves around a few big spenders—the same way casinos rely on a small number of high-rolling “whales” to generate most of their profits. One 2015 study found that less than 0.5 percent of gamers accounted for two-thirds of the microtransaction money. The majority just tough it out, slogging through a game designed to be frustrating if you don’t deploy your Mastercard.

And even if you don’t blow hundreds, it’s easy to get lulled into spending quite a bit more than you’d expected to. Those loot boxes I mentioned earlier play on exactly the same psychology as slot machines in casinos do—intermittent reinforcement—wherein a random distribution of rewards encourages an addictive cycle. (I’ll just get one more loot box; maybe this will be the one with the epic powerup.) Indeed, Belgium and the Netherlands have banned loot boxes, which they regard as a form of gambling.

For further evidence of how depressing in-game transactions can be, consider that one of their great defenders is Sen. Ted Cruz. On a podcast last summer, he described how much he enjoys purchasing his success. “You can buy in-game items and make your character stronger or get advantages,” Cruz noted. “I’ll confess, I’ll sometimes buy it because it’s a lot more fun if suddenly your character has a lot of great stuff that would take you 6 to 12 months to build up.”

Ted Cruz aside, there are defenses of microtransactions worth considering. Some scholars of video games, for example, would characterize my lament—that the pay-to-succeed model spoils gaming’s meritocratic ethos—as a smug little illusion. Before smartphones came along, you could only play home video games if you or your parents owned a console or computer, and not every family could afford such luxuries. Then there was the cultural gatekeeping, video games being a boys’ club—some still are. “It’s not a meritocratic utopia at all,” says Daniel Nielsen, a doctoral candidate at Charles University in Prague who has studied microtransactions. My feelings are pretty common, he adds, but they’re still basically privileged.

He’s got a point. But even so, I’d posit that games are a good deal fairer than many of our real-world social constructs—education, homeownership, and stock market participation, to name a few. Before video games, board and card games were regarded as arenas of skill and devotion. Try adding microtransactions to your next game of chess—“pay me $15 or you’ll lose a turn”—and see how that goes.

A more interesting defense is the argument that microtransactions are economically beneficial for gamers. Pay-to-succeed has enabled developers to release thousands of free games that people with little to no discretionary cash then get to play. Yes, those games may quickly become annoying—even unplayable—if you don’t buy stuff. But as Stephens points out, people tolerate the trade-off because it lets them sample games for free. In a sense, then, microtransactions resemble a weird sort of progressive tax regime: The many enjoy limited access to a huge commons subsidized by the spending of the few—who get to enjoy all the perks. That’s not a healthy economy, but it is an economy.

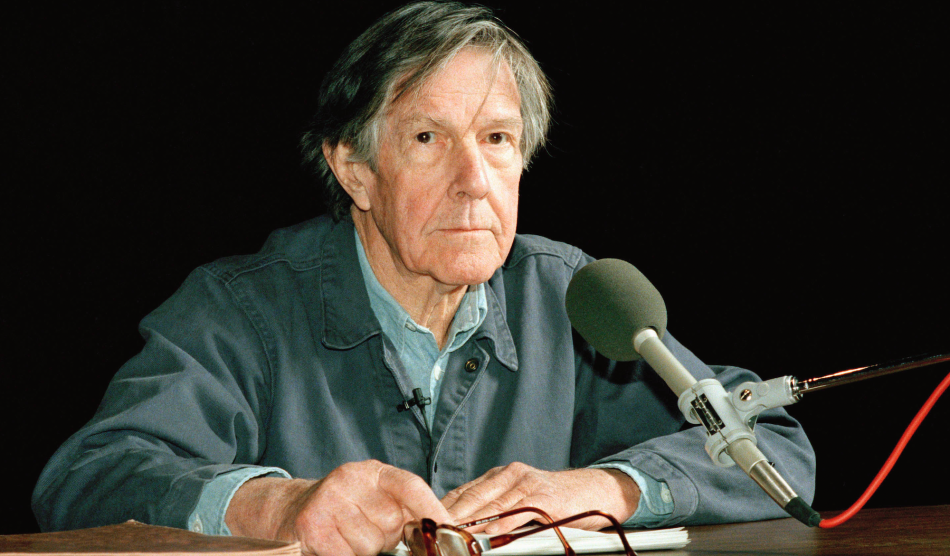

Sometimes, though, I wonder if microtransactions are a bleak harbinger of where late capitalism is headed. An online video game is a panopticon so pure that it would thrill any surveillance capitalist. Gaming companies track my scores and stats, how much money I spend in the game, and which internet service provider I use. It is not surprising, with so much personal data, that they can fine-tune the design of their games to make in-game purchases optimally seductive, thus maximally lucrative. They have nigh-perfect info on what level of “fun pain” is needed to get gamers to open their wallets.

It wouldn’t be surprising if our real lives go this way, too. More and more of our behavior is being tracked—by glitzier phones and apps that monitor our body movements, whereabouts, and exercise routes; blood-oxygen sensors in wristwatches; and voice assistants that parse the emotional tone of our voices. Silicon Valley honchos are at this moment busy building the next wave of technologies to help them track us even more insistently. Mark Zuckerberg is spending billions to coax us into his metaverse. Elon Musk’s Neuralink is building a way to merge our brains with computers. Not so far into the future we may find our everyday lives studded with dystopic little paywalls, each neatly tuned to our personal tolerance for aggravation.

Maybe we’re halfway there already. Mia Consalvo—a longtime video game scholar at Concordia University—notes that the wider marketplace contains plenty of what could pass as microtransactions. You choose your flight, but oh! Did you also want to select your seat or check a bag? (Better buy this upgrade.) You’ve got a phone plan, but hey, the data speed is kind of sluggish—pay to make it faster? In our modern world, games mimic life and life grows ever more gamelike. You can never win—and there’s no way to stop playing.