Bill Clark-Pool/Getty Images

In 2016, Facebook was caught flat-footed. Throughout that year’s election, Americans using the platform confronted a range of misinformation as Russian state-sponsored trolls, Eastern European teenagers, and others exploited the company’s weak moderation practices. Since then, the company has claimed that it takes disinformation seriously. But a new report from Avaaz, a global nonprofit activist group, suggests that Facebook still has a lot of work to do to meaningfully tamp down misinformation.

While Facebook appears to have made significant strides in stopping foreign governments from using its platform to interfere in U.S. elections, Avaaz’s analysis suggests the company is having a hard time replicating that success when it comes to disinformation designed inside the United States.

Avaaz found that Facebook could have stopped an estimated 10 billion views on top-performing pages that repeatedly shared misinformation in the eight months leading up to the 2020 elections. The lack of moderation enforcement on such pages, which Avaaz described as having shared “false or misleading information with the potential to cause public harm” by undermining democracy or public health or by encouraging discrimination, allowed them to flourish. The report finds that the pages nearly tripled their monthly interactions, from 97 million in October 2019 to 277.9 million a year later.

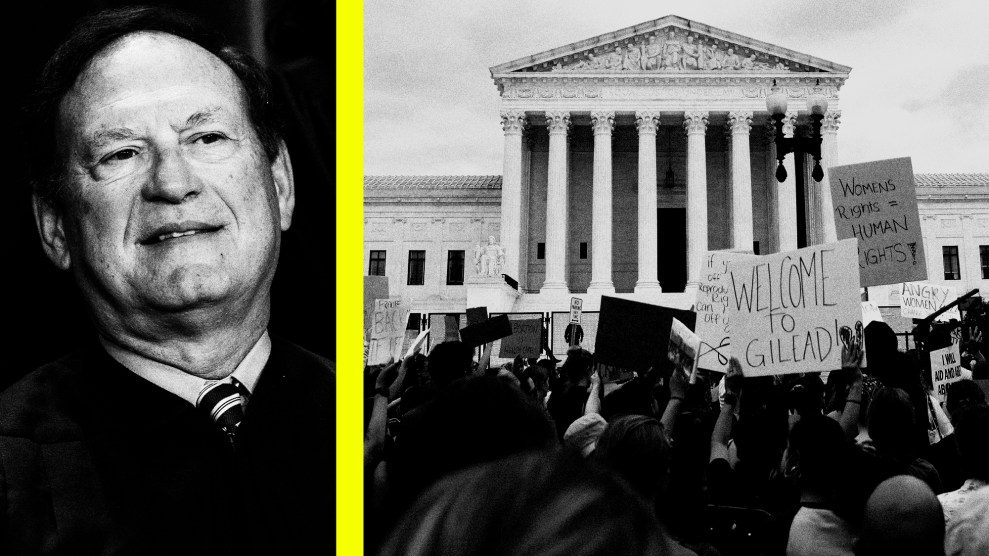

Pages backing the QAnon and Stop the Steal movements, two groups with violent histories that Facebook has repeatedly committed to excise from its platform, particularly benefited from this growth. Avaaz found 267 groups with a combined following of 32 million that spread content championing violence during the 2020 election. Sixty-nine percent of these groups had Boogaloo-, QAnon-, or militia-themed names and shared related content. Facebook has also promised to crack down on both militias and the Boogaloo movement, an extremist group pushing for a second civil war.

Facebook spokesperson Andy Stone disputed Avaaz’s findings, claiming that the “report distorts the serious work we’ve been doing to fight violent extremism and misinformation on our platform” and accusing the researchers of using “a flawed methodology to make people think that just because a Page shares a piece of fact-checked content, all the content on that Page is problematic.”

At the time Avaaz completed its report, the organization found that 118 of the 267 groups were still active and continued to promote violence to a combined following of nearly 27 million. Following Avaaz’s work, Facebook reviewed these groups and pages and removed 18 more, claiming it had already removed four of the groups before Avaaz’s report.

The report also documents how misinformation about the 2020 election circulated on Facebook long before voting closed, results were known, and conspiracy theories about a stolen election took off. By October 7, Avaaz had documented 50 posts making misleading election fraud claims in key swing states. The posts had 1.5 million interactions and reached an estimated 24 million viewers.

While Facebook might label misinformation from the account that first generated it, the company’s AI-powered tools frequently fail to detect accounts that copy the misinformation. Ahead of the election, Avaaz found that copycat accounts avoided the fact-checking labels applied to the original posts and continued to spread debunked information. Such posts reached an estimated 124 million people.

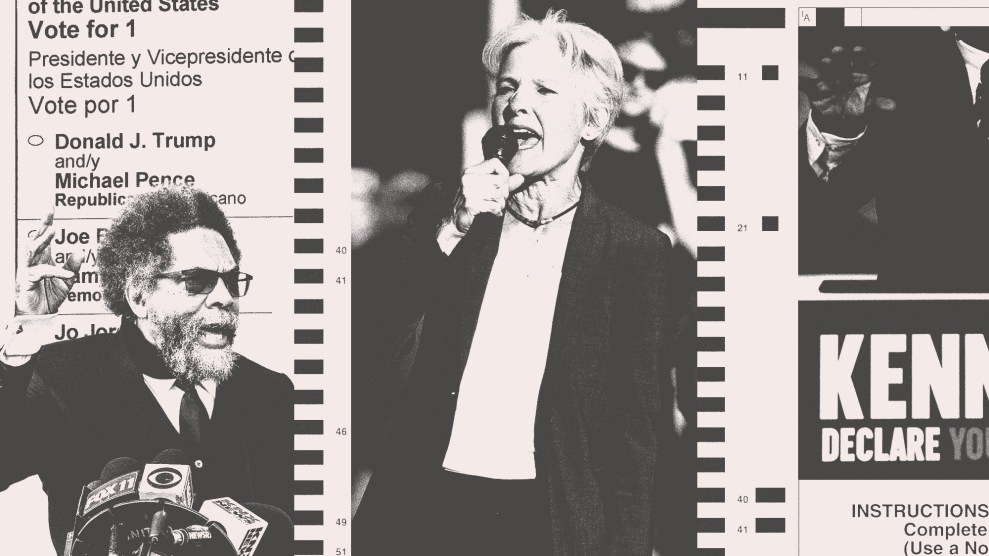

These problems persisted after the November elections. Ahead of Georgia’s Senate runoff elections on January 5, Avaaz found that Facebook failed to label posts from accounts spreading misinformation, some of which garnered more interactions than traditional news sites covering the race. “The top 20 Facebook and Instagram accounts we found spreading false claims that were aimed at swaying voters in Georgia accounted for more interactions than mainstream media outlets,” the report states.

Avaaz noted that Facebook’s failings on election misinformation go beyond AI detection problems: the company’s own policies also allowed misinformation to spread. In Georgia, for example, the group found that political ads on the platform spread information that independent fact-checkers had debunked. But because Facebook’s policy expressly allows politicians to lie in political ads, the candidates were free to spread this misinformation to millions of voters.

To counter viral misinformation, Avaaz has several recommendations for Facebook. Among them, the group urges the company to stem the influence of accounts spreading disinformation well before an election and not wait to downrank them until just weeks before, as it did in 2020. The report also suggests Facebook take more steps to proactively show corrections to users exposed to misinformation and improve its AI detection systems, which failed to keep a significant amount of false content off the platform in 2020.

Avaaz also has ideas for how Washington should begin to regulate social media platforms. The report’s first recommendation on that front, and the one that would pack the most punch against Facebook, is to create a legal framework that would force the company to change the algorithms that push disinformation as well as hateful and toxic content to the top of users’ news feeds. Critics have long pointed out that Facebook’s algorithm favors sensational content that drives engagement, even when that content is harmful, and that Facebook has resisted changing it because doing so would directly hurt the company’s growth and revenue.

In other recommendations for the federal government, Avaaz suggests mandating that social media companies publish reports disclosing details about their algorithms and their struggles against misinformation. The group also suggests that Section 230 of the Communications Act, which shields platforms from liability for user-created content they help spread, somehow be rewritten to allow regulation of misinformation. Another proposed reform, which Avaaz says would cut belief in false information by half, would require platforms to build retroactive correction tools that alert users that they were exposed to misinformation, even if it had not been identified as false at the time they saw it.

On Thursday, Facebook CEO Mark Zuckerberg is set to testify alongside other tech CEOs at a congressional hearing on social media and disinformation. “The most worrying finding in our analysis is that Facebook had the tools and capacity to better protect voters from being targets of this content, but the platform only used them at the very last moment, after significant harm was done,” said Fadi Quran, a campaign director at Avaaz, in a statement. “How many more times will lawmakers have to hear [Zuckerberg] say ‘I’m sorry’ before they decide to protect Americans from the harms caused by his company?”

This post has been updated with comment from Facebook.