<a href="http://www.shutterstock.com/gallery-2059538p1.html?cr=00&pl=edit-00">JaysonPhotography</a>/Shutterstock

Facebook users and privacy advocates erupted in anger recently after New Scientist drew attention to a 2012 study in which Facebook researchers had attempted to manipulate users’ moods. “The company purposefully messed with people’s minds,” one privacy group complained to the Federal Trade Commission.

But the mood study is far from the only example of Facebook scrutinizing its users—the company has been doing that for years, examining users’ ethnicities, political views, romantic partners, and even how they talk to their children. (Unlike the mood study, the Facebook studies listed below are observational; they don’t attempt to change users’ behavior.) Although it’s unlikely Facebook users have heard about most of these studies, they’ve consented to them; the social network’s Data Use Policy states: “We may use the information we receive about you…for internal operations, including troubleshooting, data analysis, testing, research and service improvement.”

Below are five things Facebook researchers have been studying about Facebook users in recent years. (Note that in each of these studies, data was analyzed in aggregate and steps were taken to hide personally identifiable information.)

1. Your significant others (and whether the relationship will last): In October 2013, Facebook published a study in which researchers tried to guess who users were in a relationship with by looking at the users’ Facebook friends. For the study, Facebook researchers randomly chose 1.3 million users who had between 50 and 2,000 friends, were older than 20, and described themselves as married, engaged, or in a relationship. To guess whom these users were dating, the researchers analyzed which of the users’ friends knew each other—and which ones didn’t. You might share a ton of college friends with your old college roommate on Facebook, for example. But your boyfriend might be Facebook friends with your college friends, your coworkers, and your mom—people who definitely don’t know each other. Hence, he’s special.

Using this method, researchers were able to determine a person’s romantic partner with “high accuracy”—they were able to guess married users’ spouses 60 percent of the time by just looking at users’ friend networks. The researchers also looked at a subset of same-sex couples, to see whether that changed the results. (It didn’t.)
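The friend-network idea can be illustrated with a toy example. What follows is a minimal sketch, not the researchers' actual model: it assumes a simplified score that counts pairs of mutual friends who are not friends with each other, and the function names and the tiny network are hypothetical.

```python
from itertools import combinations


def unconnected_mutual_pairs(friends, user, candidate):
    """Count pairs of mutual friends of `user` and `candidate`
    who are NOT friends with each other (a simplified, assumed score)."""
    mutual = friends[user] & friends[candidate]
    return sum(
        1
        for a, b in combinations(sorted(mutual), 2)
        if b not in friends[a]
    )


def guess_partner(friends, user):
    """Pick the friend whose mutual friends are most spread across
    otherwise unconnected parts of the user's network."""
    return max(
        sorted(friends[user]),
        key=lambda c: unconnected_mutual_pairs(friends, user, c),
    )


# Hypothetical toy network: each name maps to that person's set of friends.
friends = {
    "you": {"roommate", "partner", "college1", "college2", "coworker", "mom"},
    "roommate": {"you", "college1", "college2"},
    "partner": {"you", "college1", "coworker", "mom"},
    "college1": {"you", "roommate", "partner", "college2"},
    "college2": {"you", "roommate", "college1"},
    "coworker": {"you", "partner"},
    "mom": {"you", "partner"},
}

print(guess_partner(friends, "you"))
```

In this toy network the old roommate shares only college friends who all know each other, so the roommate scores zero. The partner's mutual friends (a college friend, a coworker, and mom) do not know one another, so the partner gets the highest score.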

Facebook then decided to see whether it could use this method to predict whether a relationship is likely to last. For this part of the experiment, researchers looked at about 400,000 users who said that they were “in a relationship” and watched to see whether those users said they were single 60 days later. The researchers concluded that relationships in which Facebook’s model correctly identified the partner were less likely to break up, noting that the results were especially accurate when the two people had been together less than a year. (So basically, if you’re only introducing your boyfriend to your friends, and not your mom, your relationship might be less likely to last.)

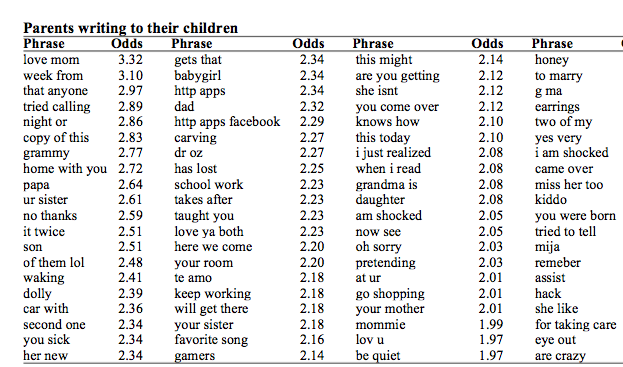

2. How your mom talks to you: For this study, Facebook looked at how parents and their kids talk to each other on Facebook. (Fun fact: On average, parent-child pairs wait 371 days after joining Facebook before becoming “friends.” Tell your little sister to stop ignoring your mom’s friend request.) The researchers examined three months of communication data pulled from September 2012. This data included comments, posts, and links shared on other users’ timelines, but not chat messages. According to the researchers, that wasn’t a privacy decision; chats are simply “too short and noisy for substantive language analysis.” Here are some of the top phrases that researchers noticed parents using in messages to their young children:

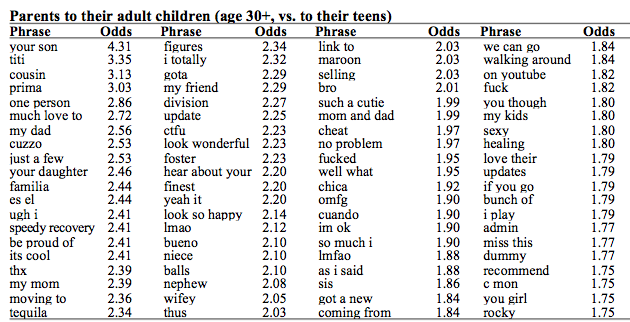

And here’s what parents are writing to their adult children, after they’ve developed filthy minds and drinking problems:

Facebook also noted that “what parents say when they’re not talking to their children is just as revealing; they use higher levels of ideology (agree but, obama, our government, policies, people need to, ethics), swearing and slang (ctfu, lmao, fucker, idk), and alcohol and sex terms (tequila, glass of wine, that ass, sexy).” Ew.

3. Your ethnicity: In this older study, from 2010, researchers wrote that “the ethnicity of a user base is an important demographic indicator that can be used for marketing, compliance, and analytics as well as a scientific tool for understanding social behavior,” but lamented that “unfortunately, ethnic information is often unavailable for practical, legal, or political reasons.” So researchers came up with a solution: They determined the ethnic breakdown of US Facebook users by using people’s names and data provided by the Census. Tested on Facebook, the researchers’ proposed model “learned” that Latoya is more likely to be a black name and Barb is more likely to be a white name. “Using both first and last names further improves estimates, largely by making better distinctions between White and Black,” the researchers wrote.

Once researchers had that data set, they started doing other studies. For example, the researchers examined pairs of people in romantic relationships on Facebook, as broken down by ethnicity. They also noted that their research suggested that “individuals’ ethnicity can be predicted through their social ties” and tried to predict users’ ethnicity based on the average ethnicity of their friends. (You should definitely not play this game at your next dinner party.) The researchers also compared users’ self-identified political views with their ethnicities, noting that “whites are more frequent in the Libertarian, Conservative, and Very Conservative categories.” The researchers did note that their research method comes with a caveat: “While ethnicity is an important factor in understanding user behavior, it is often only a proxy for other variables, such as socioeconomic status, or education. A complete analysis should control for all such factors.”

4. How you respond to conspiracy theories: In the spring of 2014, Facebook published a study on how rumors spread on the social network. The researchers looked at rumors identified by the rumor-debunking website Snopes.com that fall into a number of different categories, including politics, medicine, horror, “glurge” (i.e., sentimental stories that usually aren’t true), and 9/11. Then, the researchers found rumors posted on Facebook as photos and gathered 249,035 comments that responded to a rumor with a valid link to Snopes. Ultimately, the researchers found that reshared posts that received a comment linking to Snopes were more likely to be deleted. So, feel free to keep telling your friends that the Russian sleep experiment story is BS.

5. If you’re deleting posts before you publish them: For this 2013 study, Facebook looked at how often users start typing a post or comment and then, at the last minute, decide not to publish it, which the researchers called “self-censorship.” The researchers collected data from 3.9 million users over 17 days. They noted when someone typed more than five characters in a status update or comment box. The researchers recorded only whether text was entered, not the keystrokes or content. (This is the same way Gmail automatically saves drafts of your email, except that Facebook logs the presence of text, not actual content.) If the user didn’t share the post within 10 minutes, it was marked as self-censored. Researchers found that 71 percent of all users censored content at least once. The researchers also noted that women were less likely to self-censor, as were people with a more politically diverse set of friends.
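The self-censorship rule described here is simple enough to write down. This is a rough sketch under stated assumptions, not Facebook's code: the `ComposeEvent` record and its field names are hypothetical, and the 10-minute window is assumed to start when the user begins typing, which the article does not specify.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

WINDOW_SECONDS = 10 * 60  # "within 10 minutes" (assumed to run from the start of typing)


@dataclass
class ComposeEvent:
    """Hypothetical log record: stores only a flag and timestamps, never the text."""
    typed_more_than_five_chars: bool
    started_at: float            # seconds since some reference time
    shared_at: Optional[float]   # None if the post or comment was never shared


def is_self_censored(event: ComposeEvent) -> bool:
    """Apply the rule as described: enough text entered, but not shared in time."""
    if not event.typed_more_than_five_chars:
        return False
    if event.shared_at is None:
        return True
    return event.shared_at - event.started_at > WINDOW_SECONDS


def share_of_users_who_self_censored(events_by_user: Dict[str, List[ComposeEvent]]) -> float:
    """Fraction of users with at least one self-censored event."""
    if not events_by_user:
        return 0.0
    flagged = sum(
        1
        for events in events_by_user.values()
        if any(is_self_censored(e) for e in events)
    )
    return flagged / len(events_by_user)


# Hypothetical toy data for two users.
toy_log = {
    "user_a": [ComposeEvent(True, 0.0, 45.0), ComposeEvent(True, 100.0, None)],
    "user_b": [ComposeEvent(True, 0.0, 30.0), ComposeEvent(False, 50.0, None)],
}
print(share_of_users_who_self_censored(toy_log))
```

In the toy log, `user_a` abandons one draft and is counted as having self-censored, while `user_b` never gets past the five-character threshold on the unshared draft and is not counted.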