In June 2012, a Google supercomputer made an artificial-intelligence breakthrough: It learned that the internet loves cats. But here’s the remarkable part: It had never been told what a cat looks like. Researchers working on the Google Brain project in the company’s X lab fed 10 million random, unlabeled images from YouTube into their massive network and instructed it to recognize the basic elements of a picture and how they fit together. Left to their own devices, the Brain’s 16,000 central processing units noticed that a lot of the images shared similar characteristics that the network eventually recognized as a “cat.” While the Brain’s self-taught knack for kitty spotting was nowhere near as good as a human’s, it was nonetheless a major advance in the exploding field of deep learning.

The dream of a machine that can think and learn like a person has long been the holy grail of computer scientists, sci-fi fans, and futurists alike. Deep learning—algorithms inspired by the human brain and its ability to soak up massive amounts of information and make complex predictions—might be the closest thing yet. Right now, the technology is in its infancy: Much like a baby, the Google Brain taught itself how to recognize cats, but it’s got a long way to go before it can figure out that you’re sad because your tabby died. But it’s just a matter of time. Its potential to revolutionize everything from social networking to surveillance has sent tech companies and defense and intelligence agencies on a deep-learning spending spree.

What really puts deep learning on the cutting edge of artificial intelligence (AI) is that its algorithms can analyze things like human behavior and then make sophisticated predictions. What if a social-networking site could figure out what you’re wearing from your photos and then suggest a new dress? What if your insurance company could diagnose you as diabetic without consulting your doctor? What if a security camera could tell if the person next to you on the subway is carrying a bomb?

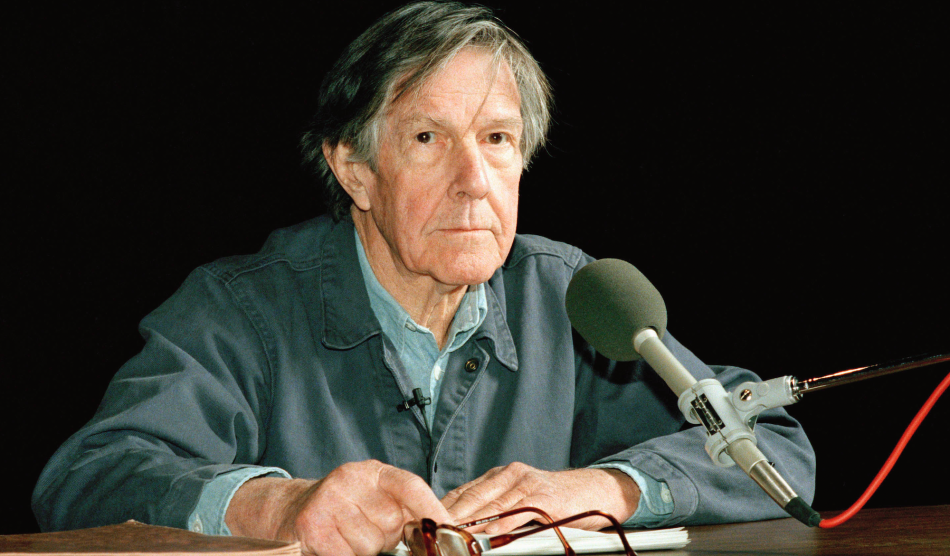

And unlike older data-crunching models, deep learning doesn’t slow down as you cram in more info. Just the opposite—it gets even smarter. “Deep learning works better and better as you feed it more data,” explains Andrew Ng, who oversaw the cat experiment as the founder of Google’s deep-learning team. (Ng has since joined the Chinese tech giant Baidu as the head of its Silicon Valley AI team.)

And so the race to build a better virtual brain is on. Microsoft plans to challenge the Google Brain with its own system called Adam. Wired reported that Apple is applying deep learning to build a “neural-net-boosted Siri.” Netflix hopes the technology will improve its movie recommendations. Google, Yahoo, Twitter, and Pinterest have snapped up deep-learning companies; Google has used the technology to read every house number in France in less than an hour. “There’s a big rush because we think there’s going to be a bit of a quantum leap,” says Yann LeCun, a deep-learning pioneer and the head of Facebook’s new AI lab.

Last December, Facebook CEO Mark Zuckerberg appeared, bodyguards in tow, at the Neural Information Processing Systems conference in Lake Tahoe, where insiders discussed how to make computers learn like humans. He has said that his company seeks to “use new approaches in AI to help make sense of all the content that people share.” Facebook researchers have used deep learning to identify individual faces from a giant database called “Labeled Faces in the Wild” with more than 97 percent accuracy. Another project, dubbed PANDA (Pose Aligned Networks for Deep Attribute Modeling), can accurately discern gender, hairstyles, clothing styles, and facial expressions from photos. LeCun says that these types of tools could improve the site’s ability to tag photos, target ads, and determine how people will react to content.

Yet considering recent news that Facebook secretly studied 700,000 users’ emotions by tweaking their feeds and that the National Security Agency harvests 55,000 facial images a day, it’s not hard to imagine how these attempts to better “know” you might veer into creepier territory.

Not surprisingly, deep learning’s potential for analyzing human faces, emotions, and behavior has attracted the attention of national-security types. The Defense Advanced Research Projects Agency has worked with researchers at New York University on a deep-learning program that sought, according to a spokesman, “to distinguish human forms from other objects in battlefield or other military environments.”

Chris Bregler, an NYU computer science professor, is working with the Defense Department to enable surveillance cameras to detect suspicious activity from body language, gestures, and even cultural cues. (Bregler, who grew up near Heidelberg, compares it to his ability to spot German tourists in Manhattan.) His prototype can also determine whether someone is carrying a concealed weapon; in theory, it could analyze a woman’s gait to reveal she is hiding explosives by pretending to be pregnant. He’s also working on an unnamed project funded by “an intelligence agency”—he’s not permitted to say more than that.

And the NSA is sponsoring deep-learning research on language recognition at Johns Hopkins University. Asked whether the agency seeks to use deep learning to track or identify humans, spokeswoman Vanee’ Vines says only that the agency “has a broad interest in deriving knowledge from data.”

Deep learning also has the potential to revolutionize Big Data-driven industries like banking and insurance. Graham Taylor, an assistant professor at the University of Guelph in Ontario, has applied deep-learning models to look beyond credit scores to determine customers’ future value to companies. He acknowledges that these types of applications could upend the way businesses treat their customers: “What if a restaurant was able to predict the amount of your bill, or the probability of you ever returning? What if that affected your wait time? I think there will be many surprises as predictive models become more pervasive.”

Privacy experts worry that deep learning could also be used in industries like banking and insurance to discriminate or effectively redline consumers for certain behaviors. Sergey Feldman, a consultant and data scientist with the brand personalization company RichRelevance, imagines a “deep-learning nightmare scenario” in which insurance companies buy your personal information from data brokers and then infer with near-total accuracy that, say, you’re an overweight smoker in the early stages of heart disease. Your monthly premium might suddenly double, and you wouldn’t know why. This would be illegal, but, Feldman says, “don’t expect Congress to protect you against all possible data invasions.”

And what if the computer is wrong? If a deep-learning program predicts that you’re a fraud risk and blacklists you, “there’s no way to contest that determination,” says Chris Calabrese, legislative counsel for privacy issues at the American Civil Liberties Union.

Bregler agrees that there might be privacy issues associated with deep learning, but notes that he tries to mitigate those concerns by consulting with a privacy advocate. Google has reportedly established an ethics committee to address AI issues; a spokesman says its deep-learning research is not primarily about analyzing personal or user-specific data—for now. While LeCun says that Facebook eventually could analyze users’ data to inform targeted advertising, he insists the company won’t share personally identifiable data with advertisers.

“The problem of privacy invasion through computers did not suddenly appear because of AI or deep learning. It’s been around for a long time,” LeCun says. “Deep learning doesn’t change the equation in that sense, it just makes it more immediate.” Big companies like Facebook “thrive on the trust users have in them,” he argues, so consumers shouldn’t worry about their personal data being fed into virtual brains. Yet, as he notes, “in the wrong hands, deep learning is just like any new technology.”

Deep learning, which also has been used to model everything from drug side effects to energy demand, could “make our lives much easier,” says Yoshua Bengio, head of the Machine Learning Laboratory at the University of Montreal. For now, it’s still relatively difficult for companies and governments to efficiently sift through all our emails, texts, and photos. But deep learning, he warns, “gives a lot of power to these organizations.”