gremlin/iStock

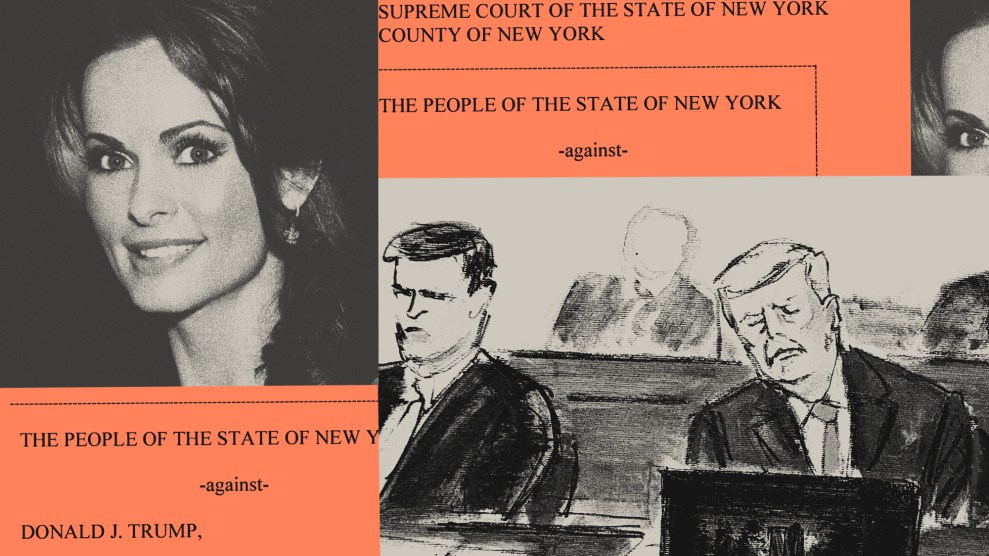

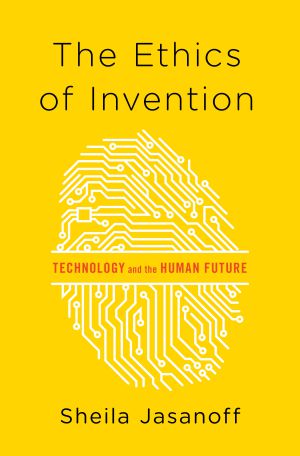

In a world where personal information is ubiquitous and accessible, shouldn’t you have the right to be forgotten? How should we deal with traces of our online selves? These are just two of many questions and issues explored in Sheila Jasanoff’s new book, The Ethics of Invention, which was published this week. Jasanoff, a professor of science and technology studies at the Harvard Kennedy School of Government, explores ethical issues that have been created by technological advances—from how we should deal with large-scale disasters such as Bhopal or Chernobyl to the more hidden conundrums of data collection, privacy, and our relationship with tech giants like Facebook and Google.

Jasanoff believes we don’t sufficiently acknowledge how much power we’ve handed over to technology, which, she writes, “rules us as much as laws do.” What we need, she says, is far more reflection on the role that tech plays in our lives now and what role we want it to play in the future. One of her hopes in writing this book was to explore the “need to strengthen our deliberative institutions to rethink things,” so that “people will recognize that this is a democratic obligation every bit as much as elections.” She sees that putting “a technology into place [is] like putting a political leader into place, and we should take political responsibility for the consequences of technology in the same way that we at least try to take political responsibility for who we elect.”

I talked to Jasanoff about the different ways we should approach tech, whether we can rightly predict what will happen in the future, and if there’s hope for us before we all become controlled by robots or a version of the rolling, chubby humans in Wall-E.

Mother Jones: You start your book by discussing a lot of the different ways people have approached and thought about technology, including theories around determinism. Why do you reject those ideas and say we have to recognize our human influence and values in technology and our agency over it?

Sheila Jasanoff: Well, first of all, I’m delighted to see that you’ve picked up one of the major messages of the book so clearly. The question why it’s important for us to come to grips with our own agency and our involvement in putting science and technology into our lives—it’s in a way obvious, because otherwise we take something that we human beings have created and raise it to a pedestal where we think that the technology determines for itself how humans’ future ought to evolve. I think that’s a risky proposition for many, many reasons. First of all, it makes us less careful about the harmful dimensions of technological design, which undoubtedly exist. But secondly, it also makes us less sensitive to the inequalities involved in allowing technologies to develop in particular ways, and this is something I think is really important to put front and center as we become more and more dependent on technology in every dimension of our lives.

MJ: Can you elaborate a little more on the inequalities that you just mentioned?

SJ: Let me begin with the best-known historical example, because that is tied up with these myths that need to be revisited and rethought: the idea of the Luddite. The Luddites were a group of people who have become proverbial as anti-technology because these were British weavers who, in the early days of the introduction of the mechanical loom, went about and broke the looms.

Historically, the idea that you take something novel and you break it has been seen as the ultimate rejection of Enlightenment values, of progress, of civilization—because how could you possibly move forward if you break technology? I think that that misses the point, that if you introduce any kind of technology, what you’re introducing is a new way of living and the consequences of that new way of living for people who were enmeshed in a different way of living need to be thought through.

So I think there’s a direct comparison between that and today’s GMO protests. This is the area in contemporary science and technology where there’s been the most concentrated protests, and one type of protest has been to go to field sites, where GMOs of all sorts have been experimentally planted, and local farmers and activists have gone there and ripped up these plants. And again, scientists and many policymakers see this as a form of Luddism. But that keeps us from recognizing that farming is a very complicated way of living…and by introducing a different kind of more industrialized, more standardized agriculture, we’re actually interfering with long-standing patterns. This may be better or this may be for the worse, but that is something that we need to debate. But to not debate it, to just decide that just because it is a technological new thing, therefore it has to be propagated as fast as possible, as widely as possible, that seems to be a mistake, in ethical terms and in terms of democratic politics as well.

MJ: It’s interesting that you bring up the GMO protests and the resistance to new technologies, because it seems as if some technologies are more acceptable than others. We seem to be more okay with mobile phones but we’re resistant to other things. Why are some technologies and inventions more accepted than others?

SJ: In general, people feel that technologies they perceive as liberating are easier to accept than ones that they feel are imposing controls on them, so something like the mobile phone, which allows you to do the same functionalities as an old landline did, only from different places and in different contexts, is seen as more liberating than the stay-in-one-place phone. Whereas a technological development that actually is perceived as a form of control—so, for instance, GMOs that have been made sterile into the next season so that you can’t save the seeds as your parents and grandparents may have done for eons—that will come across to farmers as a kind of imposed technology and not a liberating technology.

But I would caution against thinking that acceptance itself should be seen as an indicator that this is a good thing. With data in particular, we may be okay with it, but it’s related to the argument about GMOs. Many people have said, “Look, Americans have accepted the introduction of GMOs into agriculture, and 300 million who are okay with it can’t be wrong, therefore why shouldn’t everybody in the world accept it?” I think [the question is] whether we’ve accepted it in a deliberative way, aware of all the problems, or whether we’ve accepted it because we just didn’t know, or nobody gave enough time to think. I’m trying to make people more alert that mere acceptance isn’t a good enough indicator that something is ethical. You actually need to stop and think. Acceptance on the basis of ignorance or deceit is not the same thing as the acceptance on the basis of ongoing vigorous democratic debate.

MJ: You said it’s hard to predict what will happen with technology. One interesting example where people have been trying to predict what’s going to happen—or what technology is going to cost society—is around automation and self-driving cars. Even some tech companies say this is the next frontier and people will lose jobs. What do you think about that debate?

SJ: It’s not just about self-driving cars, but it’s about robotics and automation in general. I think there are certain things people would recognize as having benefits. For instance, there’s a huge consensus that life-prolonging drugs are a good thing, but that debate has happened independently of the moral and philosophical debate about the kind of quality of life that we’re buying for individuals with these additional 10, 20, 30 years we might be giving them.

My view of the right kind of debate would be that we should not decouple them. We should not decouple the discussions of who the losers are and what will happen to them from who the winners are and whether it will be beneficial. I’m all in favor of being able to imagine new frontiers with the aid of technologies, but I want a more compassionate approach that also recognizes that every time you’re talking about new frontiers, there will be certain kinds of costs attached. There will be people who don’t quite understand how to handle email who will decide to have private servers and then not know how to excuse themselves when it may be something as simple-minded as they were a little too far along in their lives to really figure out how to go back and forth between two different accounts. I’ve known cases of high-up government officials who preferred to use their personal laptops instead of the highly secured [ones] that their jobs required of them. Not because they weren’t smart, not because they weren’t good public servants, but because they simply were a little less skillful than maybe their grandchildren would have been in this case.

MJ: This sounds as if technological development is actually very Darwinian. Is it?

SJ: I want a society in which we don’t throw things and people away with quite the abandon we do, especially people. This is why the idea of disruptive technologies is quite dangerous, because we ought to be asking ourselves, what exactly are we disrupting? We should be disrupting structures that are unjust, we should be disrupting structures that were put into place without us ever having bought into them. We seem to buy into the rush into progress or what is imagined to be progress, without asking the questions: So what happens to the fact that not everybody is going to agree to this form of progress, and not everyone is going to have access to this form of progress, and not everybody is going to think that they want to give up their present way of living for the sake of achieving this particular kind of progress?

MJ: We talked about responsibility and asking more questions in general. Where do you think responsibilities lie: the individual, or the government?

SJ: Or maybe neither. My profession is usually described as an educator, so I do think that institutions like mine have a huge responsibility, and over the years I’ve become more committed to that. We need to make new kinds of citizens who reflect on the way in which technology enables and empowers things in their lives in the same way that citizens are trained to think about how law, politics, and government play a role in their lives. Ultimately, I am kind of a sucker for democracy, so I do think that what kinds of citizens we have in our societies are more foundational than what kinds of governments we have, and that the responsibility for self-government is ultimately with us. But we also have learned through a couple thousand years of democracy that democracies are only as good as people’s capacity to reflect on those questions. Then you cycle back to educational institutions and what they’re teaching and how they’re training people to think about their own condition. So I would put an enormous amount of responsibility on all the institutions that are responsible for making our thinking public. Of course, government has its role to play as well, but not in the form of risk regulator, more in the form of a public space where the right kinds of deliberative possibilities are created and fostered.

MJ: How do you think technology or how we think about technology will progress in the next few years or decades? Are you optimistic? Are you worried?

SJ: I think that we’ve made a lot of really fundamental discoveries and I think that frontiers we didn’t think we were going to be able to crack are, in different ways, in view. I don’t think we’re quite at the threshold of immortality yet, but we have to ask ourselves what we want out of immortality. Does it mean that the generation that achieves immortality just sits there forever, and we never propagate anymore or there’s nothing new that ever happens to the world ever again? One has to allow oneself a little time to reflect on those kinds of things. I don’t see the technological frontier as receding. I don’t see science as stopping, nor would I want it to—it’s a kind of creativity that I’m totally in favor of. But I also think that with all of that comes a huge potential for scaling things up too fast or heedlessly, not even because we failed to predict it. Climate change is there as a reminder that we can get richer and safer societies that are also consuming more and more to the point where the stability of Earth’s systems is being challenged at potentially catastrophic levels. I don’t think we can stop that. Just the very same worries I have about prediction on the positive progressive side—I mean, predictions that say we’ll be great, we’ll be fine—also apply to predictions that are too catastrophic. I’m not sure we get those predictions right either.

What I’ve advocated is just a more humble and self-aware approach to the ways in which we use technology, a wider diffusion of responsibility. Scientists not saying, “Oh, all we’re doing is the science and the regulators will pick it up”; lawyers not saying, “Oh the scientists will tell us the facts and we will make the decisions on the basis of those facts.” But a more widely shared burden on the part of society to keep asking, “What are our collective values, what kind of world do we want to bequeath to our children, and to what extent are these particular technological developments helping us go in those directions?” I think that corporations, every bit as much as governments, social movements, and universities—we all have a role to play in asking those questions. I don’t think anybody should have a monopoly on that responsibility.

This interview has been edited for length and clarity.