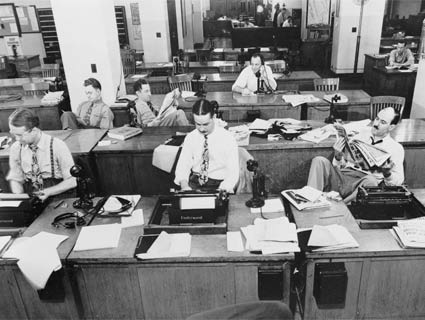

<a href="http://www.loc.gov/pictures/resource/cph.3c12969/">Marjory Collins</a>/Library of Congress.

On Monday, after a 14-month, $40 million development process, the New York Times finally launched its much-heralded, much-debated paywall scheme.[1]

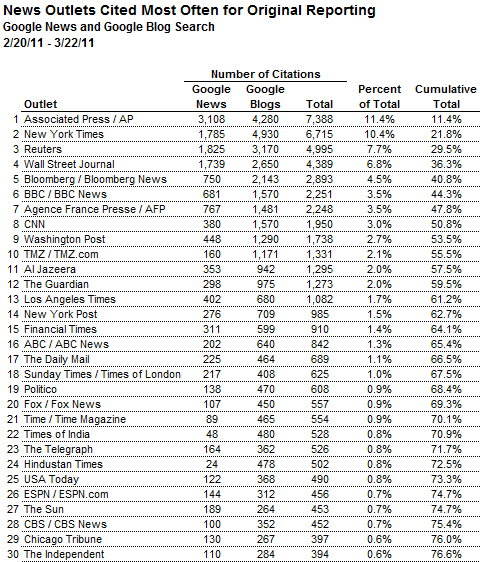

Reviews are mixed.[2] But perhaps the most interesting response to the paywall came from the Times’ own stats expert, polling guru Nate Silver. Instead of attacking or defending the concept of the paywall head-on, Silver took a different tack: he tried to quantify what, exactly, the $15 per month would buy you. To do that, he put together a ranking of how much original reporting 260 or so top journalism outlets actually produce. The results, as you might expect, made the Times look like a pretty good value. The eight top-ranked organizations (the Associated Press, the Times, Reuters, the Wall Street Journal, Bloomberg, the BBC, AFP, and CNN) were responsible for more than half of the original reporting Silver catalogued.[3]

This sort of thing, a tool that lets even a small outlet like Mother Jones compare itself empirically with its competitors, is just what journalism needs. And Silver is a genius at finding new and interesting ways to use statistics to look at just about anything. But I (and, as it turns out, Silver himself) would caution against relying too much on the rankings farther down his list.

What Silver did.

Here’s how he put the rankings together:

> I’ve tracked the number of times that the publication’s name has appeared in Google News and Google Blog Search over the past month, followed by the word “reported.” For instance, to track the number of citations for the Chicago Tribune, I’d look for instances of the phrase “Chicago Tribune reported.” (In some cases, I’ve permitted multiple search terms for the same news outlet—for example, both “BBC reported” and “BBC News reported.”)
>
> […]
>
> I’ve tallied the citations for 260 different news outlets. The list includes all blogs ranked in the Technorati top 100, all newspapers ranking in the top 100 in daily United States circulation, all English-language newspapers ranking in the top 100 in global circulation, and all news sources in the Memeorandum top 100, which contains a mix of traditional and “new media” sources. I’ve also included another 25 or so outlets, like The Financial Times, that didn’t meet any of these criteria, but which belong in the discussion. The list isn’t absolutely comprehensive, but it ought to do a pretty good job.

There are a number of problems with this methodology.

Silver acknowledges in his post that searching for “______ reported” is not going to return a comprehensive list of reporting citations:

> Obviously, there are other ways that a news outlet’s reporting might be referenced: “according to The Guardian” as opposed to “The Guardian reported.” So this won’t capture every time that an outlet’s reporting is cited; the idea, instead, is that it should be a representative sample.

Silver also acknowledges the problem of citation bias:

> It is possible that there is some bias among traditional news organizations in their unwillingness to cite, or to properly attribute, reporting by blogs and other types of online outlets. In the count for citations at Google News, which mostly consists of traditional outlets, the online organizations registered just 3 percent of the total citations. Even among the citations at Google Blog Search, however—where the tables might be turned the other way—they accounted for just 6 percent.

These are far bigger issues than Silver acknowledged in his initial post. An extremely common convention in the blogosphere is to cite reporters, especially those at well-known online outlets, by name, something traditional outlets rarely do. Bloggers also tend to use the present tense: “reports” rather than “reported,” “says” rather than “said.” Often, it’s not “TPM reported” but “Brian Beutler reports,” and neither habit shows up in a search for “______ reported.”

This doesn’t necessarily call the main takeaway from Silver’s chart into doubt: no one disputes that the AP and the Times produce far more original reporting than TPM and HuffPo. But it does mean that the rankings at the bottom of the chart (really anywhere the number of citations is in the tens or hundreds rather than the thousands) probably shouldn’t be taken all that seriously. Silver says he never meant them to be, anyway. Here’s part of what he told me in an email:[4]

> 1. The data—and I hope this was reasonably clear from the context of the original piece—was intended more to address the general point about the relative volume of reporting done by online and print outlets (and by the New York Times in particular) than to be an authoritative ranking of the top 260 news outlets, which would require a more thorough approach…And you’re right that the uncertainty is going to be concentrated more toward the bottom portion of the list. There’s even some instability in the number of hits that Google will return for the same search on any given day, which means that there’s a de facto “margin of error” here.
>
> 2. I do however feel fairly confident that different variations on the methodology would not substantially change the conclusion that online outlets account for a small share of reporting (with some exceptions in fields like technology and celebrity news).
>
> There is also some other evidence along these lines—for instance, a very detailed study by the Pew Research Center on original reporting in Baltimore which really probed into the ultimate source for each story and found that “new media” only accounted for something like 4 percent of it.
>
> 3. I also feel fairly confident that the NYT’s position would not change much…

Original reporting varies widely in quality and usefulness.

Another problem with the methodology is that there are two types of “original reporting.” The first is reporting on something that other outlets are also covering. You might be “first” with the latest crazy quote from Politician X, but another outlet is going to have it 90 or 120 seconds, or maybe 15 or 20 minutes, later. Much, if not most, US political reporting falls into this category. Many news developments, like this week’s collapse of the California state budget deal, are still covered by multiple outlets. It’s not like the early 1990s, when every regional paper worth its salt had a DC bureau and a statehouse team, but there is still a lot of duplicative coverage.

This raises the issue of scale. If a blogger wants to respond to a big news event, she’s more likely than not to cite the AP, the New York Times, or the Los Angeles Times, because those are the big, dominant outlets, even if the Sacramento Bee’s reporter had her story up first. (Meanwhile, a Times reporter is rarely if ever going to cite the Bee’s coverage of an event that a Times reporter also attended, even if the Bee had the better story first.) In Silver’s system, the Times and the wire services get hugely rewarded (with multiple “_____ reported” credits) for covering press conferences, conference calls, votes, or speeches that dozens or more of their colleagues might also attend. And there’s no guarantee that the reporter who got the mini-scoop by posting first gets any credit at all. (Silver says, “I think you’re also right that local reporting tends to get the shaft, but the New York Times does a lot of local reporting.”)

In the meantime, the second type of original reporting, the kind that no one else will report if you don’t, gets the shaft. This type of scoop is the stock-in-trade of smaller outlets, including Mother Jones: we need to offer people something they can’t get from the Times or the AP if we want our work to break through and have an impact. Silver’s methodology also doesn’t reward more detailed or more informative stories. In his system, the New York Times and the Los Angeles Times will often get multiple blog citations (and points) for their accounts of events the AP covered 35 minutes earlier, while Ken Silverstein’s Foreign Policy masterpiece on Teodorin Obiang (available for free online), which has more original reporting in it than a pile of statehouse or Capitol Hill stories, will get no credit at all.

At the same time, a citation for the Times’ reporting from Libya or Afghanistan, where there actually is a shortage of coverage, counts the same as a citation for an AP report on Sarah Palin’s latest pearl of wisdom or TMZ’s latest breaking news on Justin Bieber. (I’m not suggesting that Palin and Bieber shouldn’t be covered at all, just that there are a lot of people doing it.)

I do, of course, have a dog in this fight: Mother Jones values original reporting, and we try to produce as much of it as we can on a small nonprofit budget. And sure, any ranking system involves subjective judgments about what to reward and what to punish. But right now, I’m not convinced that Silver’s full rankings accurately measure real value.

So what should happen?

Part of the problem, of course, is that Silver is only one man, he needs to get paid for his time, and he already has a lot on his plate. It’d be nice to see a foundation interested in journalism—the Knight Foundation, say, or Google.org—invest some time and money to expand and rework the rankings. It would be great to see media outlets competing to produce more and better original reporting, and something like these rankings could spur that competition and serve as a useful measuring stick for readers and potential funders.

Silver’s pollster rankings were unquestionably revolutionary in the polling business, and a lot of pollsters resented him for it. If someone could offer something similarly thorough and rigorous for journalism, it might not be popular either. But it would be an immense public service, and it could transform the industry if outlets started competing on something other than who can post the most LOLcats, get the most cheap pageviews, or cut costs the fastest.

Silver thinks the door is wide open for others to take the lead on reporting rankings. “While it’s possible that I’ll do some follow-up on this,” he says, “it is not a case where (as I would argue is the case for the pollster ratings) there is all that much technical expertise required. Literally, we’re just typing things into Google and counting them. I’d strongly encourage people to try variations on our approach.”

The fierce debate over the paywall is a sign that people have vastly divergent views about the future of media. More measurement and accountability will only help light the way.

1) The whole system is complicated (there’s a 29-question FAQ on the Times site), but the basics are as follows: you can read 20 items a month for free before you have to start paying. After that, you have to pay at least $15 a month (more if you want to read the site using the Times’ tablet app or the “Times Reader” software). You can also read articles for free if you click through from Facebook, Twitter, other social media, blogs, or search engines, even if you’ve hit your 20-article limit.

2) Techcrunch says the Times built “the world’s stupidest paywall.” Cory Doctorow, a blogger, novelist, and copyright reform activist, calls the paywall either “wishful thinking” or “just crazy.” Reuters columnist Felix Salmon is also critical. But Slate’s Jack Shafer says that if the paywall increases the Times’ revenues “appreciably,” he’ll “be happy to call it a success.” AdAge’s Simon Dumenco knocks Doctorow for his “knee-jerk” criticism. And even the Onion, in its own way, has weighed in on the Times’ side, with an item entitled “NYTimes.com’s Plan To Charge People Money For Consuming Goods, Services Called Bold Business Move.”

3) It’s really too bad the rankings aren’t that reliable, because they actually made Mother Jones look fairly good, at least in relation to similarly sized outlets and other national print magazines. We came in 113th of 260 outlets. Just nine online-only outlets (including specialty outlets like TMZ, Engadget, and Techcrunch) are ahead of us, and we’re ahead of TPM, Newsday, the Daily Caller, Gawker, the Saint Paul Pioneer Press, the International Herald Tribune, National Journal, Le Monde, New York magazine, ProPublica, Slate, the Daily Beast, Media Matters, Foreign Policy, Salon, Vanity Fair, The Atlantic, Politics Daily, the New Yorker, the Weekly Standard, RealClearPolitics, Grist, and PBS. We’re just behind Roll Call and Gallup in the rankings, and among national print magazines that regularly cover politics, only Time, Rolling Stone, Newsweek, and The Economist are ahead of us. Imagine if we could really rely on those numbers!

4) Here’s what Silver said about the paywall itself:

> It’s true that just because outlets like the NYT have more bills to pay and need to generate more revenue per customer to break even does not necessarily beget the pay model as the optimal solution. I’m pretty agnostic as to whether the pay model is the right approach. Nevertheless, this is not a problem faced by outlets that concentrate only on reporting types of news that involve relatively high profit margins.
>
> The nightmare scenario has nothing to do with the New York Times per se, but instead involves news outlets pulling out of reporting on areas like international news and statehouse news that are hard to monetize, and having more stories go unreported, underreported, or misreported as a result.