Niall Carson/PA Wire via Zuma

On Monday evening, Facebook CEO Mark Zuckerberg got on a Zoom call with the leaders of several civil rights organizations to discuss what Facebook had become over the preceding days: a platform for President Donald Trump’s most incendiary and violent words. By the time the advocates spoke with Zuckerberg and his top deputies, Facebook was already roiling with internal dissent over its role spreading the president’s messages targeting predominantly black protesters.

“This is one of those moments where the choice is very clear,” says Steven Renderos, executive director of MediaJustice, a group that has spent years lobbying Facebook to prioritize the wellbeing of people of color who use its services. “You either support black lives or you support being a platform for white supremacy.”

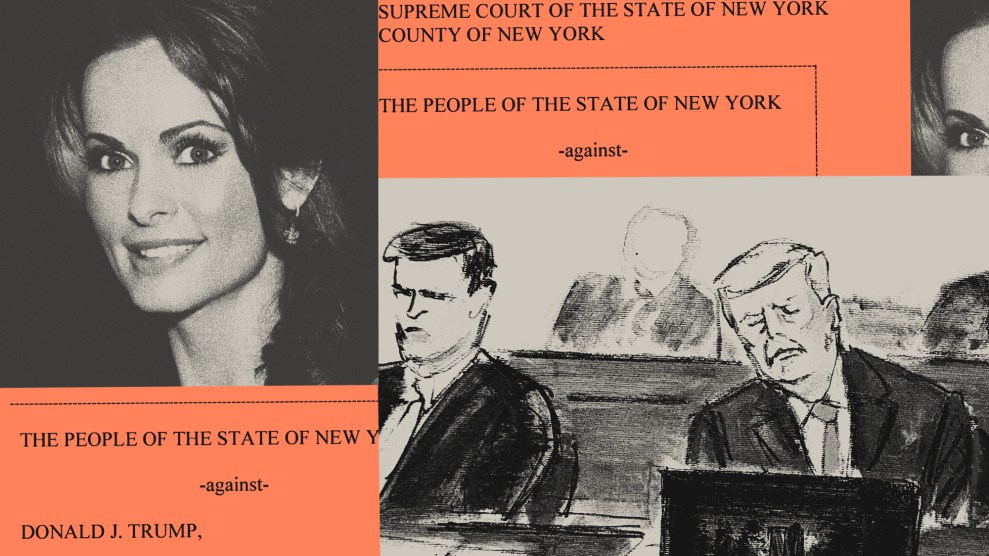

This moment of choosing came quickly as events of the past 12 days pitted protesters against the inflammatory remarks of the president. Through them, Zuckerberg led his company down a path that not only civil rights advocates but even his own employees believe history will judge as the wrong one. Last week, after Twitter fact-checked the president’s false claims that voting by mail leads to fraud, Zuckerberg quickly called in to Fox News to let it be known that his platform would not block the president’s posts. Facebook is not an “arbiter of truth of everything that people say online,” he said. The next day, as protests grew over the death of George Floyd after a cop spent nearly nine minutes kneeling on his neck, Trump pushed the envelope further, tweeting the words of a former Miami police chief who threatened civil rights protesters in 1967 by saying “when the looting starts the shooting starts.” Twitter affixed a tag to the tweet indicating it violated its policy against glorifying violence. But when Trump put the same sentiment on Facebook, Zuckerberg left it untouched.

That weekend, cell phone cameras documented numerous instances across the country of police using excessive and sometimes unlawful force against protesters, journalists, even people sitting on their front porches. New videos of police abuse accumulate daily. On Facebook, Trump continues to celebrate “overwhelming force” and “domination” by law enforcement. Zuckerberg’s call with civil rights groups coincided with federal security forces dispersing peaceful protesters from outside the White House with tear gas so that President Donald Trump could wave a Bible before the cameras, the administration’s most obviously authoritarian maneuver in an already turbulent week.

“We saw a president tear gas American citizens so he could do a photo op outside of church, and the messages that he delivered through Facebook were consistent,” says Henry Fernandez, a senior fellow at the Center for American Progress Action Fund and co-chair of Change the Terms, a coalition of civil rights and issue advocacy groups pushing tech companies to fight hate speech. “He called for violence against American citizens to help normalize that violence, and then he engaged in violence against American citizens. This is textbook how national leaders exploit and endanger their people.”

Civil rights groups have spent years pushing Facebook to take the needs of minorities and women seriously—reasoning, cajoling, sometimes flattering Facebook to get its executives to rein in hateful content. After Trump made his posts pushing shootings, activist organizations attempted to lodge complaints at the company. “The lines of communication that a lot of civil rights groups have into Facebook were just ineffective in this moment,” says Renderos. “It became clear pretty early on that it was going to be the C-suite that was going to be making a decision around [Trump’s posts] and more explicitly, Mark Zuckerberg.”

On Monday night, the groups issued a statement of defeat. “We are disappointed and stunned by Mark’s incomprehensible explanations for allowing the Trump posts to remain up,” read a joint statement from three prominent civil rights advocates—Sherrilyn Ifill of the NAACP Legal Defense Fund, Vanita Gupta of the Leadership Conference on Civil and Human Rights, and Rashad Robinson of Color of Change—issued after their conversations with Facebook’s leadership. “He did not demonstrate understanding of historic or modern-day voter suppression and he refuses to acknowledge how Facebook is facilitating Trump’s call for violence against protesters.”

“We’re grateful that leaders in the civil rights community took the time to share candid, honest feedback,” a Facebook spokesperson said of the call. “It is an important moment to listen, and we look forward to continuing these conversations.” Renderos, who was not on the call, described this moment as “a new low” for relations between Facebook and the civil rights community.

The next day, Zuckerberg defended his decision to his own employees, many of whom had staged a virtual walkout from their remote workstations. In a virtual town hall, he held firm that leaving Trump’s post up was the right call, invoking, as he has for months now, the idea that Facebook is a free speech zone even though Facebook is a private company whose algorithms determine what content people see. Many workers disagreed. “Why are the smartest people in the world focused on contorting and twisting our policies to avoid antagonizing Trump?” one asked Zuckerberg pointedly.

Zuckerberg’s decision has prompted employees to quit. “Today, I submitted my resignation to Facebook,” software engineer Timothy Aveni wrote in a post on the platform whose workforce he was leaving. “Mark always told us that he would draw the line at speech that calls for violence. He showed us on Friday that this was a lie. Facebook will keep moving the goalposts every time Trump escalates, finding excuse after excuse not to act on increasingly dangerous rhetoric.” Facebook and its CEO, he concluded, are “on the wrong side of history.”

“He was the primary voice challenging Twitter on television,” says Fernandez. “He was an apologist against Twitter’s actions on behalf of Donald Trump. That is who Zuckerberg has become.”

Facebook’s arrival at this moment was a long time coming. But it didn’t have to be like this. For nearly a decade, advocates have warned Facebook that the platform was being used as a weapon of hate against their communities. In some instances the problem was hate speech, in others the doxxing of Black Lives Matter activists. In 2016, Facebook became a source of disinformation and voter suppression, much of it directed toward African Americans. Facebook allowed its events pages to be used to organize anti-Muslim rallies and the white supremacist protests in Charlottesville that turned deadly. As Facebook’s powers of surveillance grew, so did its relationships with police departments. All the while, it transformed into an advertising business, bringing in tens of billions of dollars annually, while failing to apply the civil rights laws that could have prevented those ads from becoming a bastion of discrimination. It was not until 2018, and after years of effort, that Facebook began to acknowledge and try to mend these issues. It agreed to a civil rights audit of its platform, which spawned new rules particularly to prevent voter suppression, and began to crack down—albeit inadequately—against white supremacists on the platform.

That same year, Facebook was caught red-handed in two major scandals, which prompted more promises to change. The first was the Cambridge Analytica affair, where the company was careless with users’ personal data. The second was viscerally worse: reporters and human rights investigators revealed that the platform had been used for years by Myanmar’s government and military to push a genocide against the country’s Rohingya Muslim minority. “We weren’t doing enough to help prevent our platform from being used to foment division and incite offline violence,” Facebook acknowledged in November 2018, promising to update its “credible violence policy” and “remove misinformation that has the potential to contribute to imminent violence or physical harm.”

But the era of collaboration between civil rights groups and the tech giant following the revelations of Cambridge Analytica and election interference was short-lived. Instead, Facebook worked to curry favor with the Trump administration, presumably out of fear of regulation that would hurt the company’s bottom line. In August 2016, Facebook handed oversight of its trending stories page to an algorithm, which guided millions of users to fake pro-Trump stories, a coup for complaining conservatives. After the election, under constant pressure from Trump, his campaign, administration, and Republican lawmakers to maintain a conservative-friendly platform, Facebook decided that its civil rights audit would be paired with one looking into anti-conservative bias so as not to anger Republicans. Last fall, Zuckerberg announced that politicians would be allowed to lie in Facebook posts and advertisements. While he promised continued guardrails against voter suppression, staying on Trump’s good side never entails one policy decision; it’s a never-ending series of tests. And the content that Trump put on Facebook this week was the biggest test yet.

Despite the company’s rules against posts that could suppress the vote, Trump’s lies aimed at dissuading people from casting absentee ballots during a pandemic remain on the platform. Despite policies against inciting violence, the president’s words—“when the looting starts, the shooting starts”—have also stayed up.

“Just as in Myanmar, Facebook is choosing to actively participate as the megaphone for that hate and violence,” says Fernandez, recalling the words of a United Nations investigator who said that Facebook had “turned into a beast” in Myanmar. “I think we’re seeing the same thing here.”

As the civil rights advocates look ahead to November’s elections, they worry how much further Facebook will bend its own rules to appease Trump. What will happen as the policies Facebook put in place to safeguard elections “get tested by Trump, Trump’s campaign, the PACs that are supporting Trump? What happens when we start seeing those kinds of activities pop up again? Will they choose to do nothing?” wonders Renderos. “Because that has been what they’ve told us they will do based on the events of this past week.”

“We’re stepping into a moment in which the elections are largely going to be contested and fought online,” he says, referencing the likelihood that shelter-in-place orders aimed at stemming the spread of the coronavirus will return before the fall. The biggest battlefield is Facebook.

Even as groups worry about what Zuckerberg will do in the next five months, the events of the past week—a pandemic, economic crisis, the killing of Black people at the hands of police and white vigilantes, protests and violent clashes between the state and the people—stand out to them as a moment that will go down in history.

“In the long through line of history, I think there have been moments in which there are these kinds of forks in the road of the right thing to do and the wrong thing to do,” says Renderos. “This will be one of those moments where you look back and you say Mark Zuckerberg was on the wrong side of history here, just like we look back and we say Bull Connor as that sheriff out in Birmingham was wrong.”

It’s unclear what Zuckerberg is thinking about his place in history, or if the question keeps him up at night. But it’s sure to be a topic of discussion for some time. What drives this powerful person whose decisions affect everyone on the planet? How did a Harvard dropout become one of Trump’s most important defenders? Fernandez finds himself wondering if the Facebook cofounder would be making different choices if he had finished college before starting Facebook. “He could have read about how history looks down upon the enablers of fascism, that people remember Henry Ford not just for making cars but for his proximity to Hitler,” he says.

As Facebook has pursued a policy of appeasement toward Trump in recent years, multiple news reports have documented the influence of Joel Kaplan, a top Facebook executive and prominent conservative, whose sway over Zuckerberg appears to have grown. The Trump administration has also exerted tremendous pressure on Facebook. The president and his campaign regularly accuse the platform of bias, putting Zuckerberg in a position of constant accommodation. Attorney General Bill Barr has reportedly opened an investigation into Facebook that, if he chose to follow through on it, could hurt Zuckerberg’s bottom line.

Facebook is a company of more than 2 billion users, not just a website and an app but also Instagram, Messenger, and WhatsApp. It recently tried to start its own cryptocurrency. Its path to more growth is one of uninhibited expansion, and any antitrust action would threaten both Zuckerberg’s ambition and monopoly. (He previously called Sen. Elizabeth Warren’s plan to break up big tech companies an “existential” threat that he would “go to the mat” to fight.) These theories see Zuckerberg as more acted upon than actor, more pawn than global power player.

More theories are sure to emerge about what is driving Zuckerberg, whose own words leave a hodgepodge of clues: he told his employees he personally didn’t like Trump’s reference to putting down riots in the 1960s but also didn’t find it an exhortation to violence. Longtime Facebook critic and Wired columnist Siva Vaidhyanathan on Wednesday wrote that he had long presumed Zuckerberg was an idealist who regretted that his platform had become a weapon of genocide in Myanmar and a tool of strongmen in countries such as the Philippines. And yet as he allows the same behavior in his own country, Vaidhyanathan, a professor of media studies at the University of Virginia, says he has now come to a different conclusion. Zuckerberg is not an idealist nor is his paycheck driving his decisions; instead, Zuckerberg is driven by power. He cites the fact that the Facebook CEO named one of his children after the Roman emperor Augustus and reminds readers that he has been known to yell “Domination!” at staff meetings—the same word Trump has been repeating all week.

“In the long run, he believes, Facebook’s domination will redeem him by making our lives better. We just have to surrender and let it all work out,” Vaidhyanathan concluded. In that view, Zuckerberg is no one’s pawn—he is doing what he thinks he must to dominate.

But the psychological state of a historical figure is not what defines their place in history. Whichever theory of Zuckerberg’s internal motivations proves right, the decisions he made this week will define him.