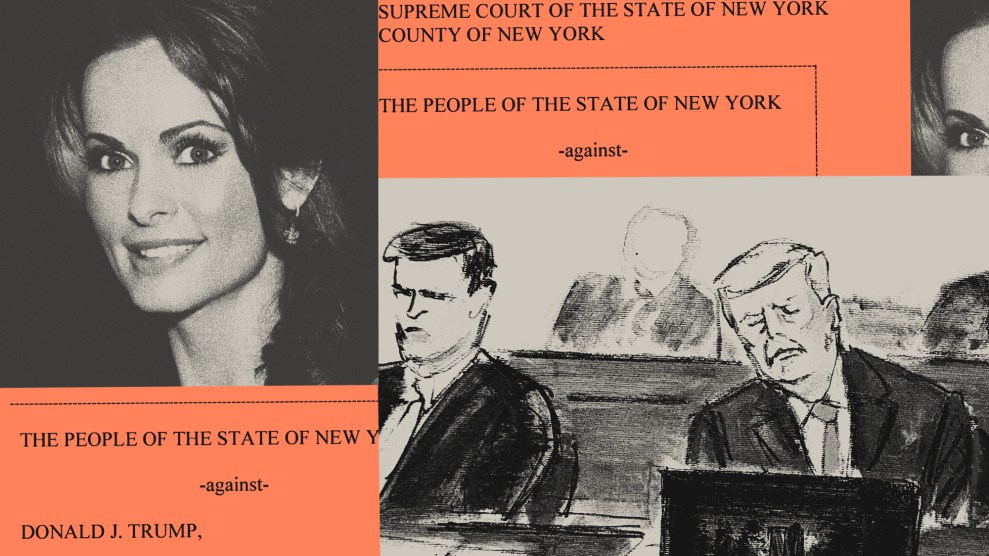

A crashed Tesla from an accident in 2018. KTVU via AP

Ah, the Super Bowl is upon us. Like most American news organizations, we are obligated to offer some predictions today, and the time of the game, for easy web traffic. The game is on at 6:30 p.m. Eastern Time. I think the Philadelphia Eagles linebackers will try to target the Kansas City Chiefs’ go-to tight end Travis Kelce unsuccessfully. I think the Chiefs’ defensive line will attempt to contain the Eagles’ prolific rushing quarterback Jalen Hurts unsuccessfully. And I think an ad from Dan O’Dowd about Tesla’s self-driving cars is going to try to cause a mild shitstorm (successfully).

O’Dowd is a safety activist and California tech entrepreneur. He has spearheaded a campaign, spending millions, to get Tesla’s fully self-driving cars off the road. In his pursuit, this year O’Dowd bought a Super Bowl commercial attacking Elon Musk’s car company’s technology that will air in Washington, DC, and several other state capitals, including Albany, Atlanta, Austin, Tallahassee, and Sacramento, according to the Washington Post.

Watch The Dawn Project’s #SuperBowl ad demonstrate critical safety defects in @Tesla Full Self-Driving. 6 months ago we reported FSD would run down a child. Tesla hasn't even fixed that! To focus their attention, @NHTSAgov must turn off FSD until Tesla fixes all safety defects. pic.twitter.com/AxJbN5oOSr

— Dan O'Dowd (@RealDanODowd) February 11, 2023

The ad by his advocacy group, which calls itself the Dawn Project, shows a Tesla with its “Full Self-Driving” mode turned on hitting a child-size mannequin. It shows a Tesla hitting a stroller. It shows a Tesla driving on the wrong side of the road. It shows a Tesla blowing through areas marked with “do not enter” signs and blazing past stationary school buses with their flashing “STOP” signs out.

The purpose of the ad, and the Dawn Project, is to try to get Congress to regulate Tesla’s self-driving cars. The Dawn Project’s broader mission is demanding “computers that are safe for humanity” and “software that never fails and can’t be hacked” across a range of areas.

The group’s footage is only one of many videos that highlight Tesla’s relatively consistent safety failures. In January, the Intercept reported on a video showing a Tesla using driving assistance features abruptly stopping on the Bay Bridge, resulting in an eight-car crash that injured nine people.

As the Post reports:

Last year, Tesla issued a cease-and-desist letter after O’Dowd’s group, the Dawn Project, published footage of the cars repeatedly striking child-size mannequins. A test run by a prominent Musk supporter included a real child to show the car recognizing them and stopping. O’Dowd has offered to run the test with Musk or any of his other critics in-person, to prove the car is making the mistakes without any tampering.

Since 2016, the National Highway Traffic Safety Administration has investigated 35 crashes in which Tesla’s Autopilot or Full Self-Driving mode was being used. According to the agency’s own data, those features were involved in at least 273 Tesla crashes between July 2021 and June 2022.

In the meantime, my colleague Abigail Weinberg wrote about one of the safest alternatives to Autopilot that already exists for those who don’t want to drive: public transportation.