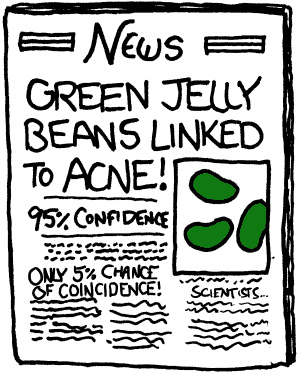

A blue-ribbon committee of the American Statistical Association spent a year arguing about what a p-value is, and finally coughed up the following explanation aimed at laymen:

Informally, a p-value is the probability under a specified statistical model that a statistical summary of the data (for example, the sample mean difference between two compared groups) would be equal to or more extreme than its observed value.

Raise your hand if you understood a word of that. Anyone?
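For readers who think better in code, here is one way to read the ASA definition, written as a minimal Python sketch. It uses a permutation test, which is only one of many ways to get a p-value, and the function name `permutation_p_value` and the sample numbers are made up for illustration. The "specified statistical model" is the assumption that the group labels don't matter, the "statistical summary" is the difference in sample means (the ASA's own example), and the p-value is the share of random label shuffles that produce a difference at least as large as the one actually observed.

```python
import random
from statistics import mean


def permutation_p_value(group_a, group_b, n_resamples=10_000, seed=0):
    """Two-sided permutation p-value for a difference in sample means.

    Model (assumed): the group labels are arbitrary, so shuffling them
    should not matter. The p-value is the fraction of shuffles whose
    mean difference is equal to or more extreme than the observed one.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # reassign labels at random
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    return at_least_as_extreme / n_resamples


# Hypothetical measurements for two groups (made-up numbers).
treated = [5.1, 4.9, 6.2, 5.8, 6.0, 5.5]
control = [4.8, 4.5, 5.0, 4.7, 5.2, 4.6]
print(permutation_p_value(treated, control))
```

Because the shuffles are random, the result would wobble slightly from run to run if the seed weren't fixed.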

Among experts, the long-running argument about p-values is abstruse and knotty. But even when you ignore the deep philosophical issues, it turns out that coming up with a close-enough explanation for average Joes and Janes is tough too. Over at 538, Christie Aschwanden hints at the problem: statisticians aren’t so much interested in explaining what a p-value is as they are in busting myths and explaining what it isn’t:

A common misconception among nonstatisticians is that p-values can tell you the probability that a result occurred by chance. This interpretation is dead wrong, but you see it again and again and again and again. The p-value only tells you something about the probability of seeing your results given a particular hypothetical explanation — it cannot tell you the probability that the results are true or whether they’re due to random chance. The ASA statement’s Principle No. 2: “P-values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone.”
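The distinction in Principle No. 2 can be illustrated with a toy simulation. Every number in it is an assumption chosen for illustration: 90 percent of the tested hypotheses are assumed to have no real effect, the rest have a modest one, and each "study" runs a simple z-test with known variance. The question the code asks is: among results that clear the usual p < 0.05 bar, what share actually came from a true null?

```python
import math
import random


def z_test_p_value(sample: list[float]) -> float:
    """Two-sided p-value for H0: true mean = 0, assuming known unit variance."""
    n = len(sample)
    z = (sum(sample) / n) * math.sqrt(n)
    return math.erfc(abs(z) / math.sqrt(2))  # equals P(|Z| >= |z|)


def share_of_significant_results_from_null(
    n_studies: int = 20_000,
    share_null: float = 0.9,  # assumed: 90% of hypotheses have no effect
    effect: float = 0.5,      # assumed effect size when there is one
    n: int = 20,              # assumed observations per study
    alpha: float = 0.05,
    seed: int = 1,
) -> float:
    rng = random.Random(seed)
    significant = 0
    significant_and_null = 0
    for _ in range(n_studies):
        null_is_true = rng.random() < share_null
        mu = 0.0 if null_is_true else effect
        sample = [rng.gauss(mu, 1.0) for _ in range(n)]
        if z_test_p_value(sample) < alpha:
            significant += 1
            if null_is_true:
                significant_and_null += 1
    # Fraction of "significant" results where the null was actually true.
    return significant_and_null / significant if significant else float("nan")


print(share_of_significant_results_from_null())
```

With these made-up settings the answer lands somewhere around 40 percent, far from the 5 percent a naive reading of "p < 0.05" suggests. Change `share_null` and the answer moves a lot, which is the point: the p-value by itself can't tell you that number.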

Personally, I’ve never been too happy with this approach, but I’ll leave that aside. I’m probably just wrong. But how about this nickel explanation?

If you’re testing a hypothesis with only a limited set of data (for example, proposing that someone is the leader of a presidential race by relying on a survey of only 1,000 people), a p-value is, informally, the probability that the small dataset validated your hypothesis merely by chance.

I suppose that’s wrong too in some kind of barely comprehensible way. It always is. But close! And, perhaps, reasonably comprehensible?
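To put numbers on the polling example above, here is a small sketch of what the standard calculation computes. The figures are hypothetical: assume 520 of the 1,000 respondents back the candidate, and treat the race as a simple two-way contest so that "not leading" means a true share of 50 percent. The p-value is then the chance of a sample at least that lopsided if the race were actually tied, computed exactly from the binomial distribution. The function name `poll_p_value` is made up for illustration.

```python
from math import comb


def poll_p_value(supporters: int, sample_size: int, null_share: float = 0.5) -> float:
    """One-sided exact binomial p-value.

    Probability of seeing `supporters` or more backers among `sample_size`
    respondents if the candidate's true share were exactly `null_share`
    (assumed two-way race; undecided and third-party voters ignored).
    """
    return sum(
        comb(sample_size, k) * null_share**k * (1 - null_share) ** (sample_size - k)
        for k in range(supporters, sample_size + 1)
    )


# Hypothetical poll: 520 of 1,000 respondents back the candidate.
print(poll_p_value(520, 1000))
```

For 520 out of 1,000 this comes out to roughly 0.11, so a poll that size would show a lead at least that big about one time in nine even if the race were dead even.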