Gracia Lam

Cindy Bethel was 6 when her babysitter’s neighbor started molesting her. Worried about what else might happen if she told her parents, she confided in her stuffed panda instead. Sometimes she acted out the abuse with Barbie and Ken dolls. A few years later, the same teen neighbor raped her on a woodpile outside his house. She didn’t tell anyone about the assault until long after she moved away from her Ohio hometown.

Even if she had spoken out, investigators might have struggled to get her full story. Young children often say what they think adults want to hear, and kids are easily influenced by an interviewer’s word choice, tone, and body language, all of which can lead them to provide false information. Children’s testimony helped condemn innocent people during the Salem witch trials. And in the 1980s, panic about alleged satanic abuse at daycare centers spread when social workers nudged kids to accuse their caregivers. A series of studies in the 1990s, led by a psychologist at Cornell University, found that prolonged suggestive questioning caused more than half of 4-to-6-year-olds to recall details about life experiences that had never occurred.

Even well-trained interviewers make mistakes, like forgetting to ask open-ended questions, says Zoe Klemfuss, an assistant professor at the University of California, Irvine, who studies children’s memory and forensic interviews. “You’re cognitively having to do so many things at once, while being sympathetic to the child, while getting legally relevant information,” she says. The stakes are high: Tens of thousands of kids testify at criminal trials every year.

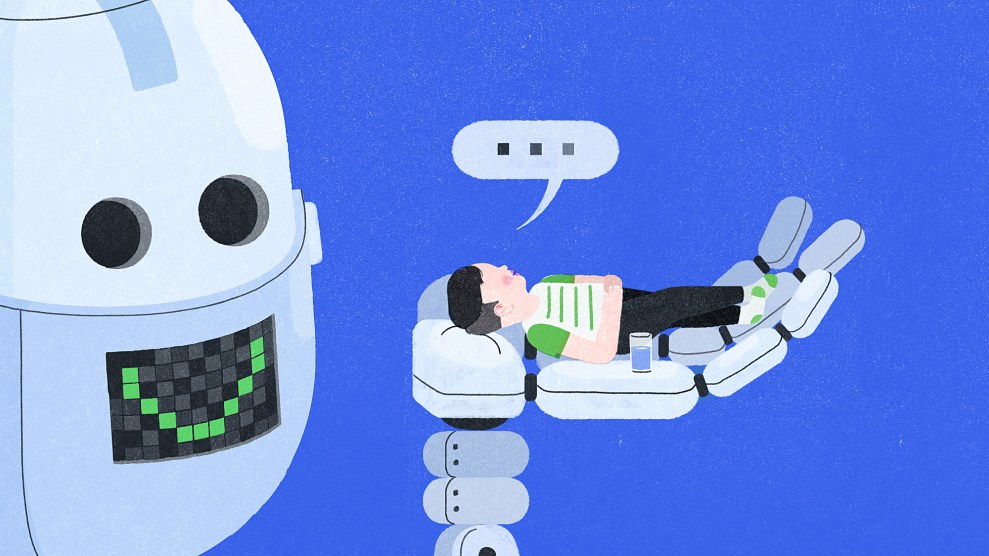

Bethel never pressed charges against her abuser. As an adult, she found her calling as a roboticist and began studying children’s interactions with robots. During one experiment she led as a postdoc at Yale, she noticed that preschoolers tended to reveal their secrets to robots just as often as they did to humans, sometimes with less prompting. Children trusted their artificial friends in much the same way Bethel had confided in her stuffed animal and dolls. Which got her thinking: What if a machine like that could be deployed during forensic investigations?

Robots offer a unique advantage over human interviewers: People don’t assume they’re judgmental. Researchers at the University of Southern California found that military service members were significantly more likely to disclose PTSD symptoms to a virtual avatar named Ellie than they were in a post-deployment health survey.

Bethel, now a professor at Mississippi State University, is testing whether a similar strategy could help kids open up. In one of her recent experiments, a child entered a room and met a 2-foot-tall blue-and-white robot called a Nao. It introduced itself as Will and began asking about life at school. A forensic interviewer listening in another room devised follow-up questions. Bethel found that more than half the kids said they could confide in the machine in a way they couldn’t with a human.

Courtesy Cindy Bethel


Some robots are even more lifelike: On a recent morning, I used my laptop to video call the founder of the Dallas-based company RoboKind. He angled his computer so I could chat with a redheaded robot named Milo, which was programmed to work with autistic kids and could manipulate its eyebrows, mouth, and forehead. “I am so happy to see you,” the robot offered. Bethel deployed Milo in one of her experiments: A video shows a high schooler leaning forward in his chair while talking to Milo about bullying, recounting how a friend had to drop out of school after being teased relentlessly. He seemed comfortable pouring his heart out to the machine, even admitting that he didn’t feel like one of the smart kids who could “talk proper.”

No one has yet studied whether children are more likely to tell robots about abuse or other crimes. Given that many kids today are familiar with WALL-E, Baymax, C-3PO, and even Alexa, asking them to chat comfortably with a robot face to “face” might not be too much of a stretch. Still, there are ethical concerns. Children might not realize the robot will share their secrets with investigators hidden from view; smart toys like Mattel’s Hello Barbie have faced criticism for passing recordings of unknowing children to third parties. Programming a robot to collect children’s heart rates or breathing rates may breach their privacy, Bethel says.

But Bethel theorizes that machines could lead to more accurate interviews. In another experiment, children viewed a slideshow depicting events of a crime and were later asked to recall the details to a human or a robot interviewer. Both the person and the bot injected false information into the conversation, but the kids were less likely to be misled by the robot.

Bethel is now exploring whether children are more comfortable with machines closer to their own size—like the 4-foot-tall Pepper, which rolls around and takes selfies with travelers at an airport in New Zealand. When I met a Pepper in a showroom at Singularity University in Santa Clara, California, the robot made sure to clarify that it’s not human. “But that shouldn’t keep us from chatting,” it said. “I am your friend—you can tell me anything.”

I could understand how someone might reveal intimate thoughts to a robot, in the same way it can be easier to ask Siri a dumb question than to ask a friend. Sure, Pepper didn’t understand everything I said, but there was something reassuring about it standing there, with its round, clementine-size eyes and friendly voice, looking so eager to listen.