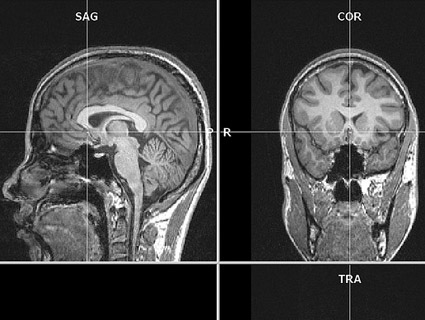

An MRI brain scan. <a href="http://www.flickr.com/photos/mohapj/3503554759/">Mohan P J</a>/Flickr

Science and the military have historically made creepy bedfellows, with military curiosity about neuroscience leading the pack. It’s no secret that since the early 1950s, the US military has had a vested interest in harnessing cutting-edge developments in neuroscience to get a leg up on national defense (à la well-publicized failures like Project MK-ULTRA). In 2011, the Defense Advanced Research Projects Agency (DARPA), the Pentagon’s research arm credited with, among other things, spearheading the invention of the internet, had a budget of over $240 million devoted to cognitive neuroscience research alone. From brain-scan-based lie detection to memory-erasure pills, some of the technologies are, at first glance, simply the stuff of sci-fi. But an essay published in the March issue of PLoS Biology tells a cautionary tale of high-tech neuroscience developments on the horizon that “could be deployed before sufficiently validated.”

The two authors, Michael Tennison and Jonathan Moreno, are no strangers to the broader implications of science; both are bioethicists, and Moreno, a professor at the University of Pennsylvania School of Medicine, has been a part of multiple government advisory bodies, including President Obama’s bioethics commission. “They see me as an honest broker,” says Moreno. “I worry about the ethical questions behind a lot of these technologies, I’m left-leaning, but I’m no pacifist—I have kids, and I think we do have to worry about national security.”

A lot gets said about scientific research that’s so-called “dual-use,” meaning its potential for good is matched or outmatched by its potential to do harm. Case in point: the recent H5N1 hubbub, where Dutch and American scientists made a potentially dangerous airborne strain of the already-dangerous bird flu virus, but only in the interest of “preventing a pandemic.” Similarly, Moreno runs through recent developments in neuroscience, connecting them to their well-funded, though still highly speculative, DoD research goals, as well as the knotty legal and ethical questions these experimental technologies raise. “Neuroscientists haven’t had the atom bomb moment that Einstein and Oppenheimer had, they haven’t even had the bird flu moment; but that time is fast-approaching,” Moreno says. Here are some of the top neuroscience developments that Moreno and DoD are keeping an eye on, and why he thinks we should care.

A lie detector that can’t lie? Standard polygraph lie detector tests use biological markers like heart rate, respiratory rate, and blood pressure as proxies for deception—but anxiety has long been suspected to be a fickle measure of truth. As early as 2000, DARPA began funding research using functional magnetic resonance imaging (fMRI) scanners to establish brain activation differences between lying and truth-telling. Now, according to Professor Hank Greely, Director of Stanford’s Center for Law and the Biosciences, there are more than 30 peer-reviewed studies establishing statistically significant differences in activation patterns between honesty and deception. And DoD has taken notice.

In 2007, the “DoD Polygraph Institute” changed its name to the “Defense Academy for Credibility Assessment.” And in 2008, a National Research Council (NRC) report concluded that “traditional measure of deception detection technology have proven to be insufficiently accurate.” The report then goes on to describe the merits of new, fMRI-based brain scan technologies and their potential for use in lie detection. Around the same time, private companies began cropping up to provide high-tech lie detection services. According to its rather hokey company website, “No Lie MRI” is currently marketing itself extensively to the Justice Department, DoD, and the Department of Homeland Security. And another company called Cephos has had its services presented in at least two court cases to date.

While some scientists dispute the accuracy of the scans (Greely pointed out that certain obscure countermeasures, like purposely wiggling your toes, have been shown to throw the brain activation patterns way off), there is a clear defense interest in developing “indisputable” lie detection. But Moreno and Greely both stress that increased prevalence of brain scans could raise potentially dangerous legal issues; required scanning could constitute unreasonable search and seizure, or even violate Fifth Amendment protections against self-incrimination. “People have begun to speculate on these issues, but right now it’s really unclear,” says Greely. “Alternatively, some have argued that we’ll need something new, that the existing Bill of Rights doesn’t protect mental privacy adequately enough in the face of these emerging technologies.”

Can a “love hormone” double as a “truth serum”? DARPA’s FY2012 budget also states a plan to “initiate investigations into the relationship between…neurotransmitters such as oxytocin, emotion-cognition interactions, and narrative structures.” Oxytocin, colloquially dubbed the “love hormone,” exploded onto the research scene in the 1990s for its demonstrated involvement in emotional behaviors such as parenting and pair-bond formation (it is secreted in the brain by breastfeeding moms and during sex). Interestingly, it’s also known to increase feelings of trust and compassion in human subjects dosed with the hormone through their noses, a highly speculative but possible boon for interrogators looking to extract info from otherwise tight-lipped prisoners.

Though still in murky scientific territory, using oxytocin to get confessions from prisoners would likely be a violation of international law. “Under Common Article 3 of the Geneva Conventions, you can’t subject any prisoner to inhuman or degrading treatment or punishment, and obviously you can’t torture them,” says George Annas, Professor of Health Law, Bioethics & Human Rights at the Boston University School of Public Health. “If used in a manner against their will, this would be a total break with international law.” But according to Lesley Wexler, Professor of International Humanitarian Law at the University of Illinois, there are many more gray areas to consider. “There is quite a debate about what humane treatment includes, and I would imagine whether oxytocin falls in that category is an open question,” she says. Wexler stresses, however, that this is a matter of domestic discretion, not international law, and interrogation of prisoners of war (or the more nebulous “unlawful enemy combatants”) is an area where the US clearly has a less-than-squeaky-clean track record. So while the exact motivations driving DoD’s interest in oxytocin research are still vague, Moreno cautions that it might be wise for us to assume the worst.

Can brains and machines interact seamlessly? “Brain-machine interfaces” work by converting neural activity into readable electrical activity for external devices, useful for things like prosthetic limbs in human patients. A DARPA project called the Cognitive Technology Threat Warning System—also known as “Luke’s Binoculars,” a Star Wars reference aptly dissected by the tech geeks at Wired—aimed to develop powerful binoculars that “convert subconscious, neurological responses to danger into consciously available information.” Using electroencephalography (EEG), which picks up on electrical activity along the scalp, the binoculars could supposedly pick up subconscious neural activity to make soldiers aware of potential threats or targets faster than they would otherwise receive the information.

What’s more troubling comes one level higher than linear brain-computer interactions. In the more involved “brain-machine-brain” interface, a device receiving neural input can feed back to alter brain activity—for example (in a wholesome, non-warzone application), prosthetic limbs that can also relay touch information back to the wearer’s brain. And according to a 2009 NRC report on neuroscience applications in the military, one of their “medium-term” goals would be to develop in-helmet magnetic stimulation to suppress “unwanted brain signals” or enhance “desirable brain networks.”

Though the authors stress that this will be a low-priority investment until more definitive research emerges, Moreno argues that the increasing trend is towards remote control. Citing a 2007 experiment where a monkey in North Carolina was taught to operate a walking robot in Japan using only its neuronal activation patterns, Moreno writes, “It’s easy to imagine a new phase of warfare in which ground troops become obsolete.”

Harder, better, faster, and sleep-deprived? Most people are familiar with “go pills” and “no-go pills” (amphetamines and tranquilizers), long used by fighter pilots to beat fatigue in the cockpit. The “go pills” received major media attention in 2003, when two American pilots stationed in Afghanistan claimed that the meds clouded their judgment and caused them to mistakenly bomb allied forces, leading to the deaths of four Canadian soldiers. In 2008, a report for the US Army looked into another drug, a narcolepsy med called modafinil, and compared it to Ritalin, a favorite cognitive enhancer among college students. The report concluded that modafinil outperforms on cognitive enhancement tests, “especially on people undergoing sleep deprivation.” Other studies of modafinil have tested its effect on the simulated flight performance of pilots sleep-deprived for as many as 40 hours at a time.

While combating fatigue and increasing mental acuity is one major arm of research, another set of cognitive enhancement experiments is trying to figure out ways to selectively decrease mental function, namely to help “erase” the crippling pain associated with traumatic memories. In 2008, experiments conducted with the drug propranolol on patients with anxiety disorders like PTSD showed promising evidence that taking the drug and then forcing a detailed run-through of the traumatic events significantly reduced patients’ strong emotional reactions to the memory. The memories were still there, but they were altered enough to be bearable.

While such cognitive enhancement treatments may seem promising and to some degree necessary, Moreno cautions that servicemembers could have very little say in the matter. “Soldiers are required to accept medical interventions that make them fit for duty,” he says. “If a warfighter is allowed no autonomous freedom to accept or decline an enhancement intervention…then the ethical implications are immense.” The military stresses that pilots are offered the option, for example, of taking the “go pills” after a certain number of hours without rest, but pilots who decline the pills are axed from their missions for essentially putting their own lives in danger. This puts yet another emotional pressure on soldiers already overburdened by all their minds are forced to handle.